Inferring Temporal Compositions of Actions Using Probabilistic Automata


Apr 28, 2020

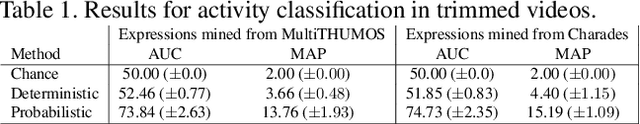

This paper presents a framework for recognizing temporal compositions of atomic actions in videos. Specifically, we express temporal compositions of actions as semantic regular expressions and derive an inference framework based on probabilistic automata that recognizes a complex action when the input video features satisfy its expression. Our approach differs from existing work, which either predicts long-range complex activities as unordered sets of atomic actions or retrieves videos using natural language sentences. Instead, the proposed approach recognizes complex fine-grained activities using only pretrained action classifiers, without requiring any additional data, annotations, or neural network training. To evaluate the potential of our approach, we provide experiments on synthetic datasets and on challenging real-world action recognition datasets such as MultiTHUMOS and Charades. We conclude that the proposed approach can extend state-of-the-art primitive action classifiers to vastly more complex activities without large performance degradation.
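To make the idea of scoring a video against a regular expression concrete, the following is a minimal sketch and not the authors' implementation. It assumes that a pretrained classifier yields, for each frame, a probability distribution over mutually exclusive symbols, and that frames are treated as independent. The function name `acceptance_probability`, the table `A_THEN_B`, and the symbols `"a"`, `"b"`, and `"other"` are all hypothetical. The expression "action a occurs, and action b occurs at some later frame" is hand-compiled into a three-state deterministic automaton, and probability mass is pushed through it frame by frame.

```python
from typing import Dict, List, Set

# Hypothetical automaton for the pattern ".* a .* b .*":
# state 0 = nothing seen yet, state 1 = "a" seen, state 2 = "a" then "b" seen.
A_THEN_B: List[Dict[str, int]] = [
    {"a": 1, "b": 0, "other": 0},
    {"a": 1, "b": 2, "other": 1},
    {"a": 2, "b": 2, "other": 2},
]


def acceptance_probability(
    frame_probs: List[Dict[str, float]],
    transitions: List[Dict[str, int]],
    start: int,
    accepting: Set[int],
) -> float:
    """Probability that the frame sequence drives the automaton to an accepting state.

    Assumes each frame's symbol probabilities sum to 1 and that frames are
    independent given the classifier outputs (a simplifying assumption).
    """
    belief = [0.0] * len(transitions)
    belief[start] = 1.0  # all probability mass starts in the initial state
    for probs in frame_probs:
        nxt = [0.0] * len(transitions)
        for state, mass in enumerate(belief):
            if mass == 0.0:
                continue
            # Split this state's mass across successors, weighted by symbol probability.
            for symbol, p in probs.items():
                nxt[transitions[state][symbol]] += mass * p
        belief = nxt
    return sum(belief[s] for s in accepting)


if __name__ == "__main__":
    uncertain_video = [
        {"a": 0.5, "b": 0.0, "other": 0.5},
        {"a": 0.0, "b": 0.5, "other": 0.5},
    ]
    print(acceptance_probability(uncertain_video, A_THEN_B, start=0, accepting={2}))  # 0.25
```

Under these assumptions no training is involved: only the classifier outputs and the automaton are needed, which mirrors the abstract's claim in spirit. A real system would compile arbitrary expressions automatically and handle multi-label frame predictions, both of which this sketch omits.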
