Improving 3D Object Detection for Pedestrians with Virtual Multi-View Synthesis Orientation Estimation

Paper and Code

Jul 15, 2019

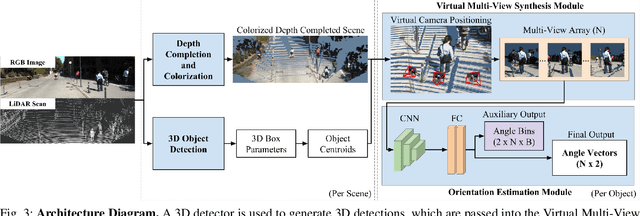

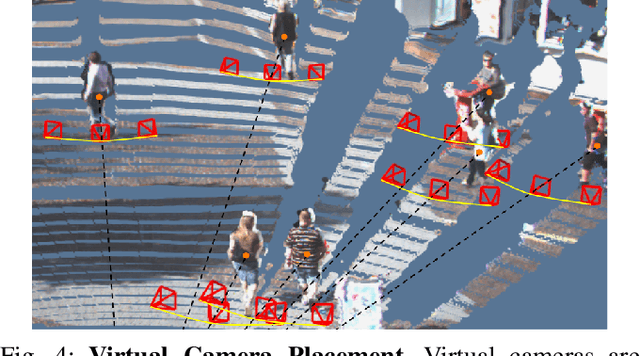

Accurately estimating the orientation of pedestrians is an important and challenging task for autonomous driving because this information is essential for tracking and predicting pedestrian behavior. This paper presents a flexible Virtual Multi-View Synthesis module that can be adopted into 3D object detection methods to improve orientation estimation. The module uses a multi-step process to acquire the fine-grained semantic information required for accurate orientation estimation. First, the scene's point cloud is densified using a structure-preserving depth completion algorithm, and each point is colorized using its corresponding RGB pixel. Next, virtual cameras are placed around each object in the densified point cloud to generate novel viewpoints that preserve the object's appearance. We show that this module greatly improves orientation estimation for the challenging pedestrian class on the KITTI benchmark. When used with the open-source 3D detector AVOD-FPN, we outperform all other published methods on the pedestrian Orientation, 3D, and Bird's Eye View benchmarks.
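
The virtual-camera step described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names, the horizontal arc of viewing angles, the fixed radius, the pinhole focal length, and the far-to-near point splatting are all assumptions chosen for illustration. It assumes the points are already densified and colorized, uses a camera frame with x right, y down, and z forward, and assumes the object is not directly above or below the original camera.

```python
import numpy as np


def look_at_rotation(cam_pos, target):
    """Rotation whose rows are the camera's right, down, and forward axes."""
    forward = target - cam_pos
    forward = forward / np.linalg.norm(forward)
    world_down = np.array([0.0, 1.0, 0.0])  # assumed y-down convention
    right = np.cross(world_down, forward)
    right = right / np.linalg.norm(right)
    down = np.cross(forward, right)
    return np.stack([right, down, forward])


def virtual_camera_positions(centroid, angles_deg, radius):
    """Place cameras on a horizontal arc around the object centroid.

    Angle 0 lies on the ground-plane direction from the centroid back
    toward the original camera, which is assumed to sit at the origin.
    """
    centroid = np.asarray(centroid, dtype=float)
    to_cam = -centroid
    to_cam[1] = 0.0  # keep the arc horizontal
    to_cam = to_cam / np.linalg.norm(to_cam)
    positions = []
    for angle in np.deg2rad(angles_deg):
        c, s = np.cos(angle), np.sin(angle)
        rot_y = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])
        positions.append(centroid + radius * (rot_y @ to_cam))
    return np.array(positions)


def render_view(points, colors, cam_pos, target, focal, size):
    """Project colored points into a square virtual image (pinhole model)."""
    rotation = look_at_rotation(cam_pos, target)
    pts_cam = (points - cam_pos) @ rotation.T
    in_front = pts_cam[:, 2] > 1e-6
    pts_cam, cols = pts_cam[in_front], colors[in_front]
    u = np.round(focal * pts_cam[:, 0] / pts_cam[:, 2] + size / 2).astype(int)
    v = np.round(focal * pts_cam[:, 1] / pts_cam[:, 2] + size / 2).astype(int)
    image = np.zeros((size, size, 3), dtype=colors.dtype)
    # Draw far points first so that nearer points overwrite them.
    for i in np.argsort(-pts_cam[:, 2]):
        if 0 <= u[i] < size and 0 <= v[i] < size:
            image[v[i], u[i]] = cols[i]
    return image
```

Calling `virtual_camera_positions(centroid, [-25.0, 0.0, 25.0], radius)` and then `render_view` once per returned position would yield one image per virtual viewpoint, each centered on the object. How those views are consumed by the orientation estimator is outside the scope of this sketch.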
