Implicit Shape Completion via Adversarial Shape Priors

Apr 21, 2022

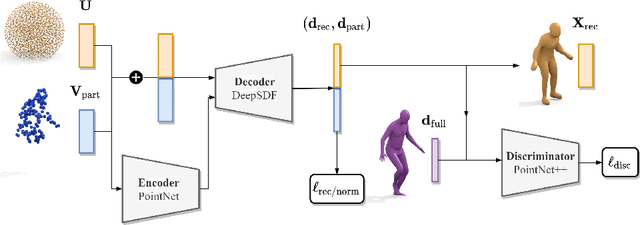

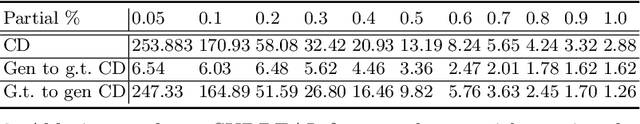

We present a novel neural implicit shape method for partial point cloud completion. To that end, we combine a conditional Deep-SDF architecture with learned, adversarial shape priors. More specifically, our network encodes a partial input into a global latent code and then recovers the full geometry via an implicit signed-distance generator. Additionally, we train a PointNet++ discriminator that encourages the generator to produce plausible, globally consistent reconstructions. In this way, we effectively decouple two challenges: predicting shapes that are realistic, i.e., that imitate the training set's pose distribution, and predicting shapes that are accurate, in the sense that they replicate the partial input observations. In our experiments, we demonstrate state-of-the-art performance for completing partial shapes, considering both man-made objects (e.g., airplanes and chairs) and deformable shape categories (human bodies). Finally, we show that our adversarial training approach leads to visually plausible reconstructions that are highly consistent in recovering missing parts of a given object.
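The pipeline described in the abstract (partial point cloud, then a global latent code, then a conditional signed-distance decoder) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the layer sizes, the random untrained weights, and the names `encode`, `decode_sdf`, and `data_term` are all assumptions made for illustration. The encoder is a simplified PointNet-style max-pooling network, and the PointNet++ discriminator and adversarial loss are omitted entirely.

```python
import numpy as np

rng = np.random.default_rng(0)
LATENT_DIM, HIDDEN = 32, 64  # hypothetical sizes, not taken from the paper


def init_linear(n_in, n_out):
    """Random, untrained weights; stands in for learned parameters."""
    weight = rng.normal(0.0, 1.0 / np.sqrt(n_in), (n_in, n_out))
    return weight, np.zeros(n_out)


enc_params = [init_linear(3, HIDDEN), init_linear(HIDDEN, LATENT_DIM)]
dec_params = [init_linear(LATENT_DIM + 3, HIDDEN), init_linear(HIDDEN, 1)]


def encode(partial_points):
    """Map an (N, 3) partial point cloud to one global latent code.

    A shared per-point MLP followed by max pooling, so the code does
    not depend on the order of the input points.
    """
    h = partial_points
    for weight, bias in enc_params:
        h = np.maximum(h @ weight + bias, 0.0)  # ReLU
    return h.max(axis=0)


def decode_sdf(latent, queries):
    """Predict a signed distance for each (M, 3) query point.

    The latent code is concatenated to every query, which is the usual
    way to condition an implicit decoder on a shape code.
    """
    tiled = np.broadcast_to(latent, (queries.shape[0], latent.shape[0]))
    h = np.concatenate([tiled, queries], axis=1)
    (w1, b1), (w2, b2) = dec_params
    h = np.maximum(h @ w1 + b1, 0.0)
    return (h @ w2 + b2)[:, 0]


def data_term(latent, partial_points):
    """Assumed accuracy term: observed points should lie on the zero
    level set, so their predicted |SDF| is penalized."""
    return float(np.abs(decode_sdf(latent, partial_points)).mean())


partial = rng.uniform(-1.0, 1.0, (128, 3))   # toy partial observation
latent = encode(partial)
queries = rng.uniform(-1.0, 1.0, (256, 3))   # where to evaluate the shape
sdf = decode_sdf(latent, queries)
```

In a trained model, the completed surface would be extracted as the zero level set of `decode_sdf` over a dense query grid. The realism requirement from the abstract would enter as an additional adversarial loss from the discriminator, which this sketch leaves out.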
