Impedance Adaptation by Reinforcement Learning with Contact Dynamic Movement Primitives

Paper and Code

Mar 21, 2022

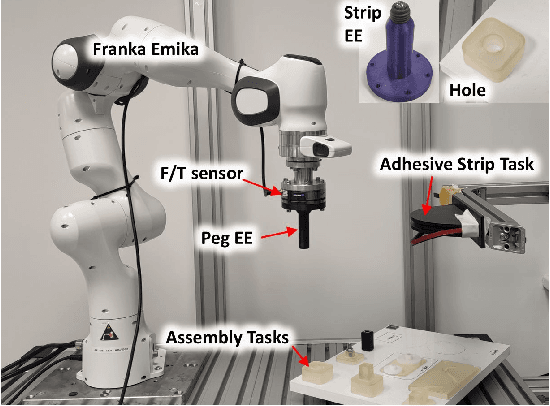

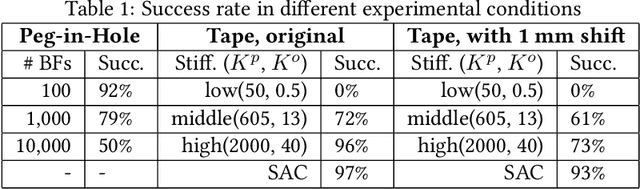

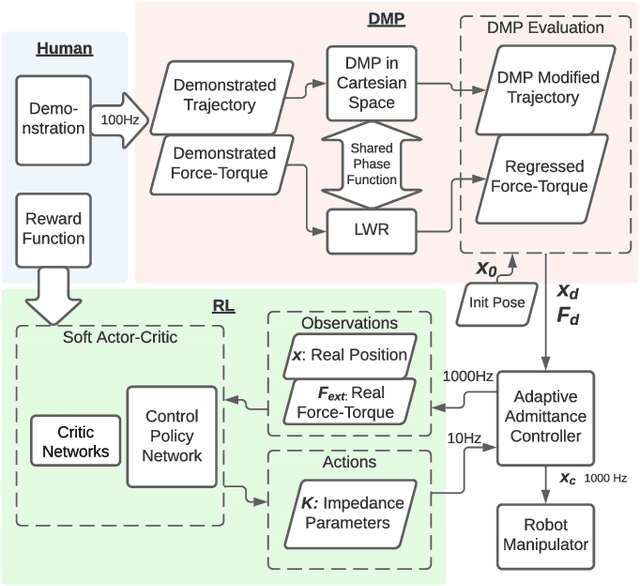

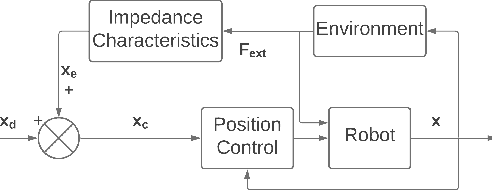

Dynamic movement primitives (DMPs) allow complex position trajectories to be efficiently demonstrated to a robot. In contact-rich tasks, where position trajectories alone may not be safe or robust over variation in contact geometry, DMPs have been extended to include force trajectories. However, different task phases or degrees of freedom may require the tracking of either position or force -- e.g., once contact is made, it may be more important to track the force demonstration trajectory in the contact direction. The robot impedance balances between following a position or force reference trajectory, where a high stiffness tracks position and a low stiffness tracks force. This paper proposes using DMPs to learn position and force trajectories from demonstrations, then adapting the impedance parameters online with a higher-level control policy trained by reinforcement learning. This allows one-shot demonstration of the task with DMPs, and improved robustness and performance from the impedance adaptation. The approach is validated on peg-in-hole and adhesive strip application tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge