How Trustworthy are the Existing Performance Evaluations for Basic Vision Tasks?


Aug 08, 2020

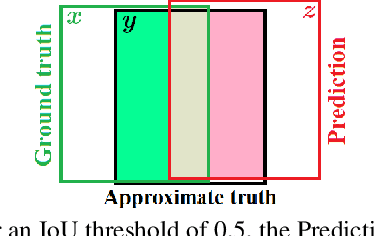

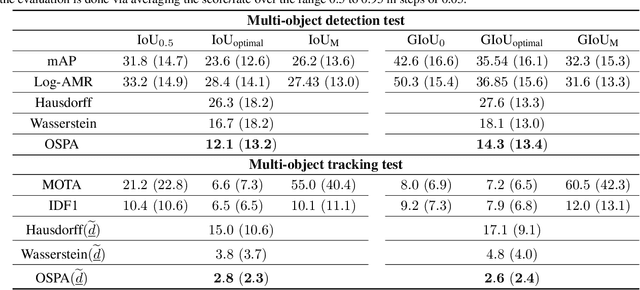

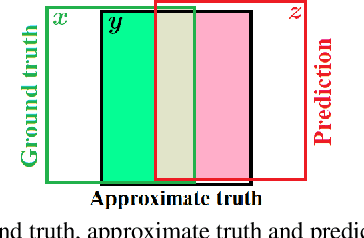

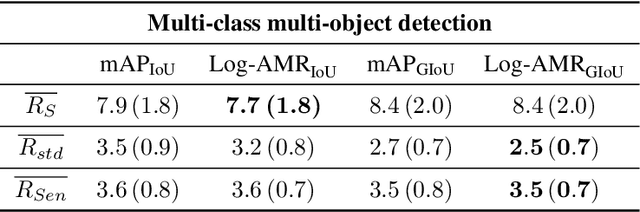

Performance evaluation is indispensable to the advancement of machine vision, yet its consistency and rigour have not received proportionate attention. This paper examines performance evaluation criteria for basic vision tasks, namely object detection, instance-level segmentation, and multi-object tracking. Specifically, we advocate the use of criteria that are (i) consistent with mathematical requirements such as the metric properties, (ii) contextually meaningful in sanity tests, and (iii) robust to hyper-parameters for reliability. We show that many widely used performance criteria do not fulfill these requirements. Moreover, we explore alternative criteria for detection, segmentation, and tracking based on metrics for sets of shapes, and assess them against the same requirements.
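To make requirement (i) concrete, the following is a minimal sketch of how the metric properties (identity, symmetry, triangle inequality) could be checked numerically for a candidate distance between shapes. It is an illustration only, not the paper's method: the Jaccard distance (one minus intersection-over-union) on axis-aligned boxes is assumed here as the example distance, and the names `iou`, `jaccard_distance`, `check_metric_properties`, and the sample `boxes` are hypothetical.

```python
from itertools import product

def iou(a, b):
    """Intersection-over-union of two axis-aligned boxes (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    # Two empty boxes are treated as identical (assumption for this sketch).
    return inter / union if union > 0 else 1.0

def jaccard_distance(a, b):
    """Assumed example distance: 1 - IoU."""
    return 1.0 - iou(a, b)

def check_metric_properties(dist, shapes, tol=1e-12):
    """Empirically test the metric properties of `dist` on a finite sample.

    Passing on a sample does not prove `dist` is a metric; a single
    failure does prove that it is not.
    """
    identity = all(
        (dist(a, b) <= tol) == (a == b) for a, b in product(shapes, repeat=2)
    )
    symmetry = all(
        abs(dist(a, b) - dist(b, a)) <= tol for a, b in product(shapes, repeat=2)
    )
    triangle = all(
        dist(a, c) <= dist(a, b) + dist(b, c) + tol
        for a, b, c in product(shapes, repeat=3)
    )
    return {"identity": identity, "symmetry": symmetry, "triangle": triangle}

# Hypothetical sample: overlapping unit-height boxes along the x-axis.
boxes = [(0, 0, 2, 1), (1, 0, 3, 1), (2, 0, 4, 1), (0, 0, 4, 1)]

report = check_metric_properties(jaccard_distance, boxes)
print(report)

# A distance that looks similar but is not a metric: the squared Jaccard distance.
squared = lambda a, b: jaccard_distance(a, b) ** 2
print(check_metric_properties(squared, boxes))
```

On this sample the squared variant breaks the triangle inequality: the first and third boxes only touch (distance 1), while each is at squared distance 4/9 from the second box, and 4/9 + 4/9 < 1. A check of this kind can only falsify a candidate criterion; establishing that a criterion is a metric requires a proof.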
