How Much Should I Trust You? Modeling Uncertainty of Black Box Explanations


Aug 11, 2020

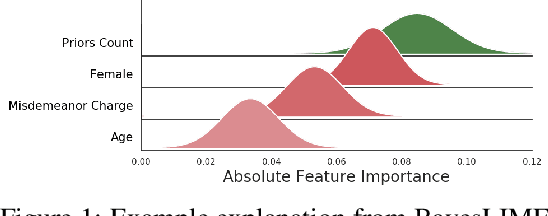

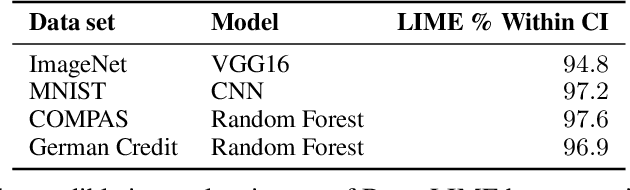

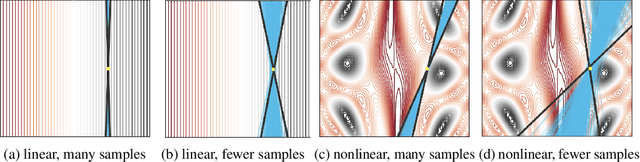

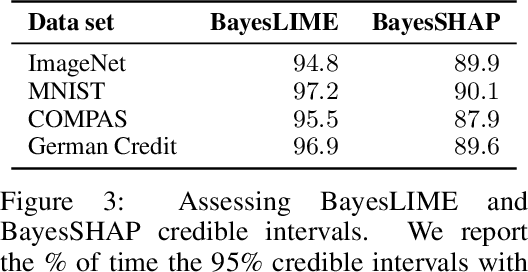

As local explanations of black box models are increasingly being employed to establish model credibility in high-stakes settings, it is important to ensure that these explanations are accurate and reliable. However, local explanations generated by existing techniques are often prone to high variance. Further, these techniques are computationally inefficient, require significant hyper-parameter tuning, and provide little insight into the quality of the resulting explanations. Identifying the lack of uncertainty modeling as the main cause of these challenges, we propose a novel Bayesian framework that produces explanations that go beyond point-wise estimates of feature importance. We instantiate this framework to generate Bayesian versions of LIME and KernelSHAP. In particular, we estimate credible intervals (CIs) that capture the uncertainty associated with each feature importance in a local explanation. These CIs are tight when we have high confidence in the feature importances of a local explanation. The CIs are also informative both for estimating how many perturbations we need to sample (sampling can proceed until the CIs are sufficiently narrow) and for deciding where to sample (sampling in regions with high predictive uncertainty leads to faster convergence). Experimental evaluation with multiple real-world datasets and user studies demonstrates the efficacy of our framework and the resulting explanations.
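To make the idea of credible intervals on feature importances concrete, here is a minimal sketch. It is not the authors' implementation: it assumes a simple Gaussian prior on the weights of a locally weighted linear surrogate and a plug-in estimate of the noise variance, which may differ from the paper's model. The function names (`bayesian_local_explanation`, `explain_until_confident`) and all parameters (`prior_var`, `kernel_width`, `tol`, and so on) are hypothetical and chosen only for illustration. The second function shows the "sample until the CIs are sufficiently narrow" stopping rule described in the abstract.

```python
import numpy as np


def bayesian_local_explanation(X, y, weights, prior_var=10.0, z=1.96):
    """Posterior mean and approximate 95% CI half-widths for a weighted
    Bayesian linear surrogate (assumed prior: coefficients ~ N(0, prior_var * I))."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.asarray(weights, dtype=float)
    n, d = X.shape

    # Plug-in noise variance from a weighted least-squares fit (an assumption
    # of this sketch; a fully Bayesian treatment would put a prior on it).
    sqrt_w = np.sqrt(w)
    ls_coef, *_ = np.linalg.lstsq(X * sqrt_w[:, None], y * sqrt_w, rcond=None)
    resid = y - X @ ls_coef
    noise_var = max(float(np.sum(w * resid**2)) / max(n - d, 1), 1e-12)

    # Gaussian posterior over the surrogate coefficients.
    XtW = X.T * w
    post_cov = np.linalg.inv(XtW @ X / noise_var + np.eye(d) / prior_var)
    post_mean = post_cov @ (XtW @ y) / noise_var
    half_width = z * np.sqrt(np.diag(post_cov))
    return post_mean, half_width


def explain_until_confident(black_box, x0, tol=0.05, batch=200,
                            max_samples=5000, scale=0.5,
                            kernel_width=1.0, seed=0):
    """Draw perturbations around x0 in batches until every feature's
    CI half-width falls below tol (or max_samples is reached)."""
    rng = np.random.default_rng(seed)
    x0 = np.asarray(x0, dtype=float)
    Z = np.empty((0, x0.size))
    y = np.empty(0)
    while len(y) < max_samples:
        new = x0 + scale * rng.standard_normal((batch, x0.size))
        Z = np.vstack([Z, new])
        y = np.concatenate([y, black_box(new)])

        # Proximity kernel: perturbations closer to x0 get larger weight.
        dist2 = np.sum((Z - x0) ** 2, axis=1)
        w = np.exp(-dist2 / kernel_width**2)

        # Intercept column plus centred features.
        design = np.hstack([np.ones((len(y), 1)), Z - x0])
        mean, half_width = bayesian_local_explanation(design, y, w)
        if half_width[1:].max() < tol:
            break
    # Drop the intercept; return importances, CI half-widths, samples used.
    return mean[1:], half_width[1:], len(y)
```

A usage example under the same assumptions: `importances, half_widths, n_used = explain_until_confident(model_fn, x0)`, where `model_fn` is any callable that maps an array of shape `(n, d)` to `n` predictions. A noisier or more non-linear black box yields wider intervals and therefore draws more perturbations before stopping.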
