Hierarchical Scene Parsing by Weakly Supervised Learning with Image Descriptions

Paper and Code

Jan 27, 2018

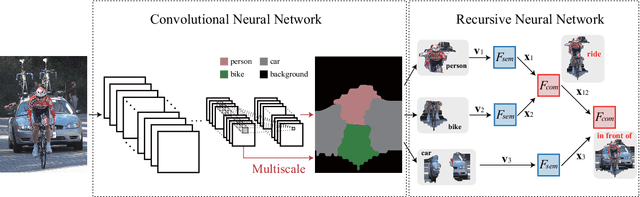

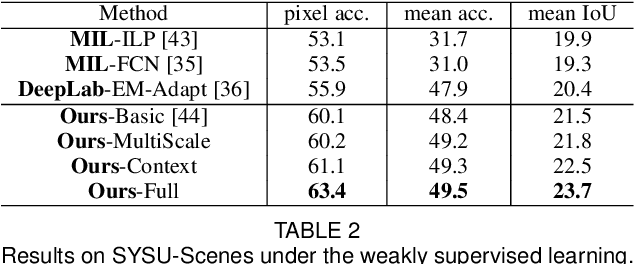

This paper investigates a fundamental problem of scene understanding: how to parse a scene image into a structured configuration (i.e., a semantic object hierarchy with object interaction relations). We propose a deep architecture consisting of two networks: i) a convolutional neural network (CNN) extracting the image representation for pixel-wise object labeling and ii) a recursive neural network (RsNN) discovering the hierarchical object structure and the inter-object relations. Rather than relying on elaborative annotations (e.g., manually labeled semantic maps and relations), we train our deep model in a weakly-supervised learning manner by leveraging the descriptive sentences of the training images. Specifically, we decompose each sentence into a semantic tree consisting of nouns and verb phrases, and apply these tree structures to discover the configurations of the training images. Once these scene configurations are determined, then the parameters of both the CNN and RsNN are updated accordingly by back propagation. The entire model training is accomplished through an Expectation-Maximization method. Extensive experiments show that our model is capable of producing meaningful scene configurations and achieving more favorable scene labeling results on two benchmarks (i.e., PASCAL VOC 2012 and SYSU-Scenes) compared with other state-of-the-art weakly-supervised deep learning methods. In particular, SYSU-Scenes contains more than 5000 scene images with their semantic sentence descriptions, which is created by us for advancing research on scene parsing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge