GraphIE: A Graph-Based Framework for Information Extraction


Oct 31, 2018

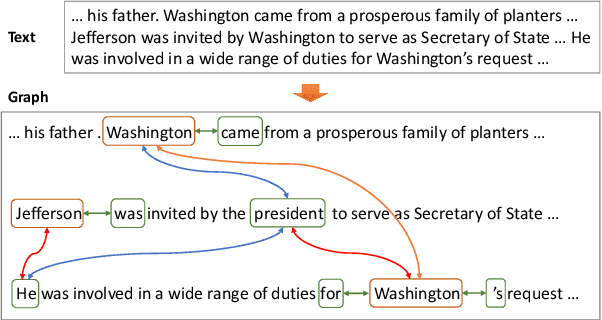

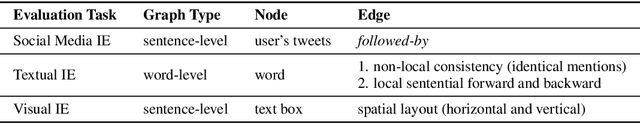

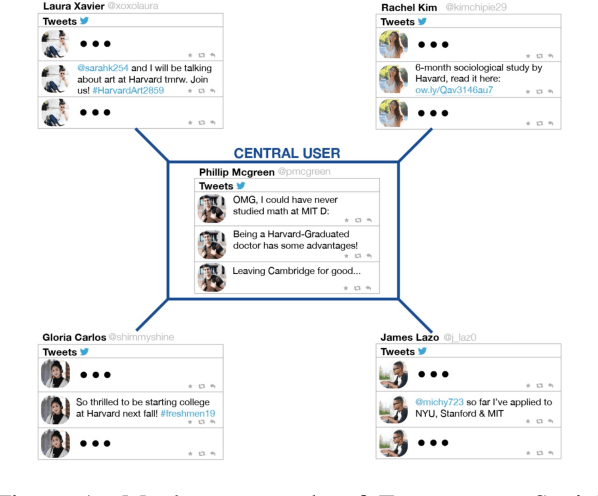

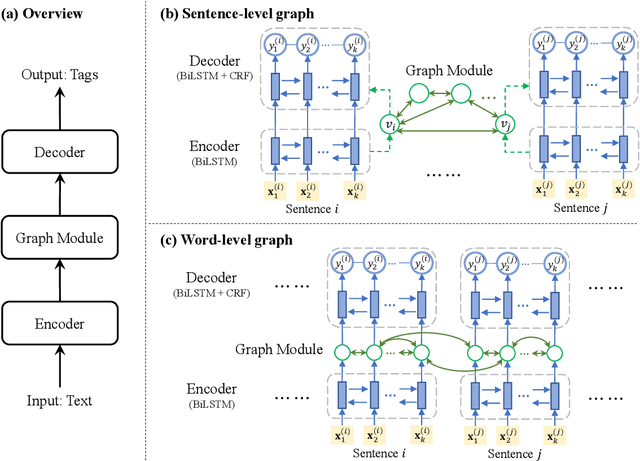

Most modern Information Extraction (IE) systems are implemented as sequential taggers and focus on modelling local dependencies. Non-local and non-sequential context is, however, a valuable source of information for improving predictions. In this paper, we introduce GraphIE, a framework that operates over a graph representing both local and non-local dependencies between textual units (i.e., words or sentences). The algorithm propagates information between connected nodes through graph convolutions and exploits the richer representation to improve word-level predictions. The framework is evaluated on three different tasks, namely social media, textual, and visual information extraction. Results show that GraphIE outperforms a competitive baseline (BiLSTM+CRF) in all tasks by a significant margin.
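To make the idea of propagating information between connected nodes more concrete, here is a minimal sketch of a single generic graph-convolution step in plain Python. It is not taken from the paper: the function name `graph_conv`, the mean-over-neighbours normalisation, the self-loops, and the ReLU non-linearity are all illustrative assumptions, and GraphIE's actual layer may differ in each of these choices.

```python
def graph_conv(features, edges, weight):
    """One generic graph-convolution step (illustrative, not GraphIE's exact layer).

    features: list of per-node feature vectors (one per textual unit)
    edges:    list of undirected (u, v) index pairs between nodes
    weight:   in_dim x out_dim matrix as a list of rows
    """
    n = len(features)
    in_dim = len(weight)
    out_dim = len(weight[0])

    # Assumption: every node also attends to itself (self-loop).
    neighbours = [{i} for i in range(n)]
    for u, v in edges:
        neighbours[u].add(v)
        neighbours[v].add(u)

    output = []
    for i in range(n):
        # Aggregate: average the features of the node and its neighbours.
        agg = [
            sum(features[j][k] for j in neighbours[i]) / len(neighbours[i])
            for k in range(in_dim)
        ]
        # Transform: linear map followed by ReLU.
        row = [
            max(0.0, sum(agg[k] * weight[k][o] for k in range(in_dim)))
            for o in range(out_dim)
        ]
        output.append(row)
    return output


# Three textual units connected in a chain: 0 - 1 - 2.
features = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
edges = [(0, 1), (1, 2)]
identity = [[1.0, 0.0], [0.0, 1.0]]
updated = graph_conv(features, edges, identity)
```

After one step, node 2, which started with an all-zero vector, carries part of node 1's features. In a framework of this kind, such graph-enriched representations would then feed a word-level tagger.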
