Good Examples Make A Faster Learner: Simple Demonstration-based Learning for Low-resource NER


Oct 16, 2021

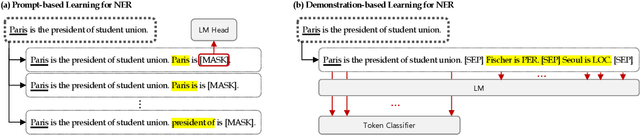

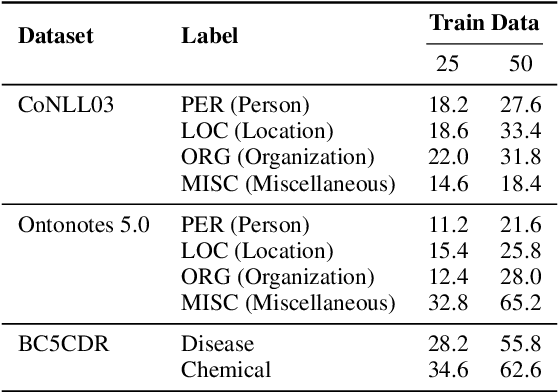

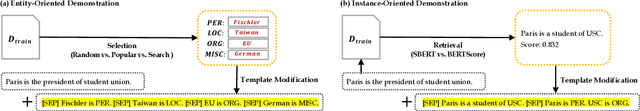

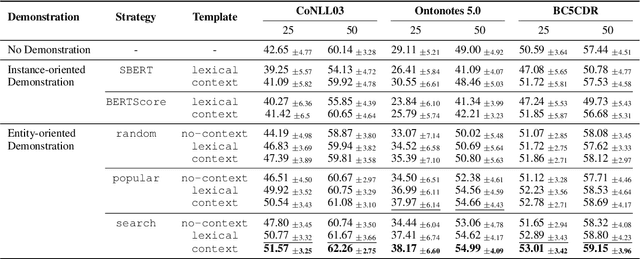

Recent advances in prompt-based learning have shown impressive results on few-shot text classification tasks by using cloze-style language prompts. There have been attempts to apply prompt-based learning to NER using manually designed templates to predict entity types. However, these two-step methods may suffer from error propagation from entity span detection. They also must prompt for every possible text span, which is costly, and they neglect the interdependency between the labels of different spans in a sentence. In this paper, we present a simple demonstration-based learning method for NER, which augments the prompt (learning context) with a few task demonstrations. Such demonstrations help the model learn the task better under low-resource settings and allow span detection and classification to be performed jointly over all tokens. We explore two variants: entity-oriented demonstration, which selects an appropriate entity example for each entity type, and instance-oriented demonstration, which retrieves a similar instance example. Through extensive experiments, we find empirically that showing one entity example per entity type, along with its example sentence, can improve performance by 1-3 F1 points in both in-domain and cross-domain settings.
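To make the two demonstration variants concrete, here is a minimal sketch of how a demonstration-augmented input might be assembled for a token-level tagger. Everything here is an illustrative assumption, not the authors' implementation: the `Example` class, the "first entity seen per type" selection rule, the token-set Jaccard similarity (standing in for whatever retriever a real system would use), and the `<sentence> <entity> is <type>.` template joined with `[SEP]` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Example:
    tokens: List[str]
    # Entity spans as (start, end_exclusive, label) over token indices.
    entities: List[Tuple[int, int, str]]


def entity_oriented_demos(train: List[Example]) -> Dict[str, Tuple[str, str]]:
    """Select one (entity, sentence) pair per entity type.

    Hypothetical selection rule: keep the first entity seen for each type.
    """
    chosen: Dict[str, Tuple[str, str]] = {}
    for ex in train:
        for start, end, label in ex.entities:
            if label not in chosen:
                entity = " ".join(ex.tokens[start:end])
                chosen[label] = (entity, " ".join(ex.tokens))
    return chosen


def instance_oriented_demo(query: List[str], train: List[Example]) -> Example:
    """Retrieve the training instance most similar to the query.

    Token-set Jaccard overlap is a stand-in for a learned similarity model.
    Ties go to the earliest training example (max keeps the first maximum).
    """
    q = {t.lower() for t in query}

    def jaccard(ex: Example) -> float:
        s = {t.lower() for t in ex.tokens}
        union = q | s
        return len(q & s) / len(union) if union else 0.0

    return max(train, key=jaccard)


def build_input(query: List[str],
                demos: Dict[str, Tuple[str, str]],
                sep: str = "[SEP]") -> str:
    """Append demonstrations after the query sentence.

    Uses a hypothetical '<sentence> <entity> is <type>.' template. A tagger
    would predict labels only for the query tokens; the demonstration
    tokens serve purely as extra context.
    """
    parts = [" ".join(query)]
    for label in sorted(demos):  # fixed order for reproducibility
        entity, sentence = demos[label]
        parts.append(f"{sep} {sentence} {entity} is {label}.")
    return " ".join(parts)


if __name__ == "__main__":
    train = [
        Example("Barack Obama visited Paris .".split(), [(0, 2, "PER"), (3, 4, "LOC")]),
        Example("Apple hired Tim Cook .".split(), [(0, 1, "ORG"), (2, 4, "PER")]),
    ]
    demos = entity_oriented_demos(train)
    print(build_input("Angela Merkel met Google executives .".split(), demos))
    print(instance_oriented_demo("Tim Cook joined Apple .".split(), train).tokens)
```

Because the demonstrations are plain text appended to the input, the same tagger handles span detection and typing in one pass over all query tokens, rather than prompting once per candidate span.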
