Generative Feature Replay with Orthogonal Weight Modification for Continual Learning

Paper and Code

May 07, 2020

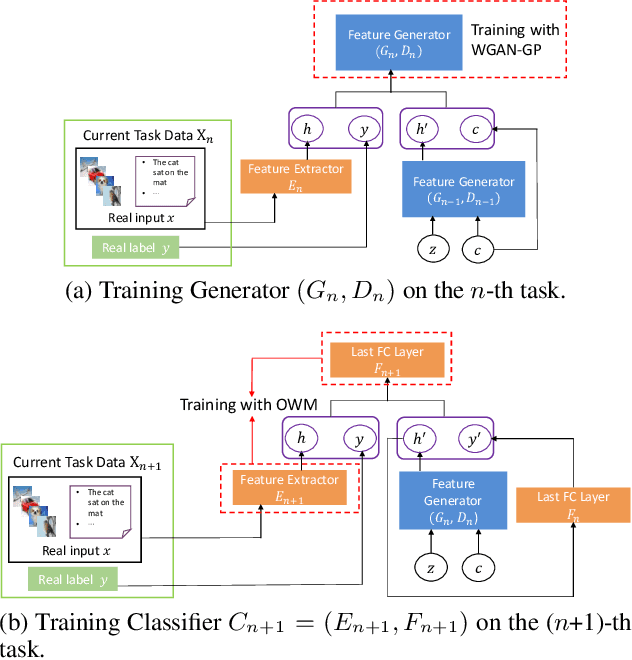

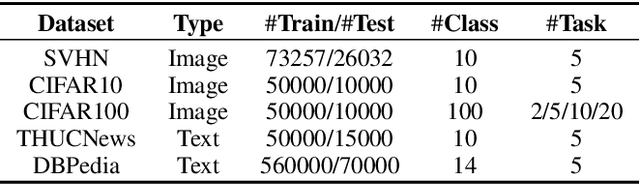

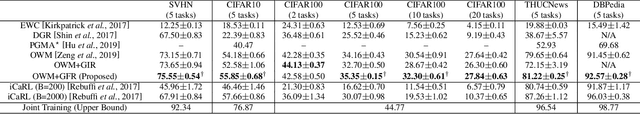

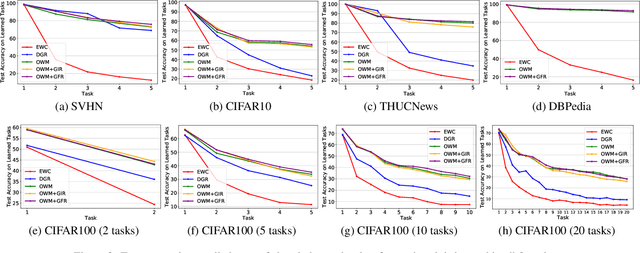

The ability of intelligent agents to learn and remember multiple tasks sequentially is crucial to achieving artificial general intelligence. Many continual learning (CL) methods have been proposed to overcome catastrophic forgetting. Catastrophic forgetting notoriously impedes the sequential learning of neural networks as the data of previous tasks are unavailable. In this paper we focus on class incremental learning, a challenging CL scenario, in which classes of each task are disjoint and task identity is unknown during test. For this scenario, generative replay is an effective strategy which generates and replays pseudo data for previous tasks to alleviate catastrophic forgetting. However, it is not trivial to learn a generative model continually for relatively complex data. Based on recently proposed orthogonal weight modification (OWM) algorithm which can keep previously learned input-output mappings invariant approximately when learning new tasks, we propose to directly generate and replay feature. Empirical results on image and text datasets show our method can improve OWM consistently by a significant margin while conventional generative replay always results in a negative effect. Our method also beats a state-of-the-art generative replay method and is competitive with a strong baseline based on real data storage.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge