Finetuning Large-Scale Pre-trained Language Models for Conversational Recommendation with Knowledge Graph

Oct 14, 2021

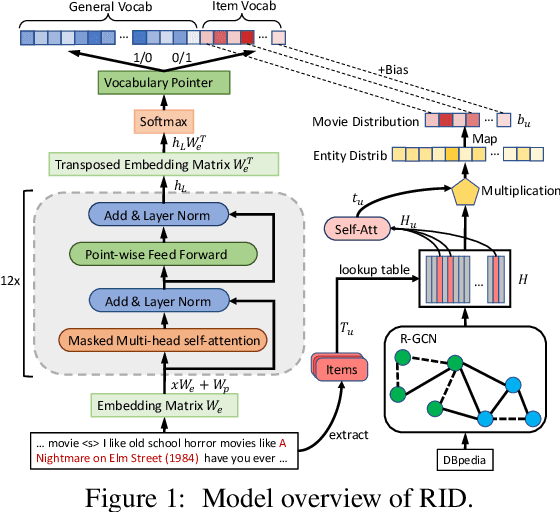

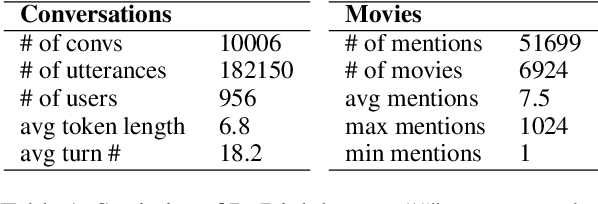

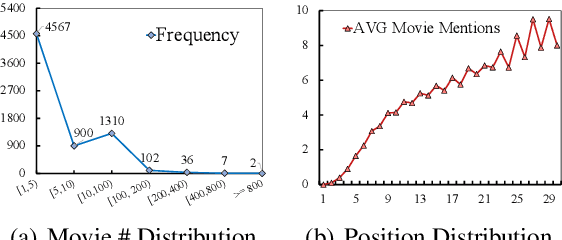

In this paper, we present a pre-trained language model (PLM)-based framework called RID for conversational recommender systems (CRS). RID finetunes large-scale PLMs such as DialoGPT together with a pre-trained Relational Graph Convolutional Network (RGCN) that encodes the node representations of an item-oriented knowledge graph. The former aims to generate fluent and diverse dialogue responses by leveraging the strong language generation ability of PLMs, while the latter facilitates item recommendation by learning better node embeddings over the structured knowledge base. To unify the two modules of dialogue generation and item recommendation in a single PLM-based framework, we expand the generation vocabulary of the PLM with an extra item vocabulary and introduce a vocabulary pointer that controls when to recommend target items during generation. Extensive experiments on the benchmark dataset ReDial show that RID significantly outperforms state-of-the-art methods on both dialogue and recommendation evaluations.
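The abstract does not spell out how the item vocabulary and the vocabulary pointer interact at decoding time. The sketch below shows one plausible reading, not RID's actual implementation. It assumes a soft sigmoid gate (`pointer_logit`) that weighs an ordinary word distribution against an item distribution, with item scores computed as dot products between the decoder state and item embeddings that would, in RID, come from the RGCN. The function names `expanded_vocab_distribution` and `decode_step` and all toy numbers are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def expanded_vocab_distribution(
    word_logits: Sequence[float],
    hidden: Sequence[float],
    item_embeddings: Sequence[Sequence[float]],
    pointer_logit: float,
) -> List[float]:
    """Mix word and item distributions into one expanded-vocabulary distribution.

    Hypothetical sketch: item scores are dot products between the decoder
    state `hidden` and item embeddings (assumed to be RGCN outputs);
    `pointer_logit` is squashed by a sigmoid into p(emit an item now).
    """
    p_item = 1.0 / (1.0 + math.exp(-pointer_logit))
    word_probs = softmax(word_logits)
    item_probs = softmax([dot(hidden, e) for e in item_embeddings])
    # Indices [0, V_w) are ordinary tokens; [V_w, V_w + V_i) are item ids.
    return [(1.0 - p_item) * p for p in word_probs] + [p_item * p for p in item_probs]


def decode_step(dist: Sequence[float], num_words: int) -> Tuple[str, int]:
    """Greedy pick: report whether the argmax is a word or an item id."""
    best = max(range(len(dist)), key=lambda i: dist[i])
    if best < num_words:
        return ("word", best)
    return ("item", best - num_words)


# Toy usage: a 3-token word vocabulary and 2 items with 2-d embeddings.
word_logits = [2.0, 0.5, 0.1]
hidden = [1.0, 0.0]
items = [[0.2, 0.1], [3.0, 0.0]]
dist = expanded_vocab_distribution(word_logits, hidden, items, pointer_logit=2.0)
print(decode_step(dist, num_words=3))  # ('item', 1)
```

With a high pointer logit the gate shifts most probability mass onto the item slots, so greedy decoding emits item 1; a strongly negative pointer logit would instead keep generation in the word vocabulary. A hard switch (for example, a special token that triggers recommendation) is an equally plausible design; the soft gate is used here only because it keeps the mixture a proper distribution over the expanded vocabulary.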
