FedCos: A Scene-adaptive Federated Optimization Enhancement for Performance Improvement

Apr 07, 2022

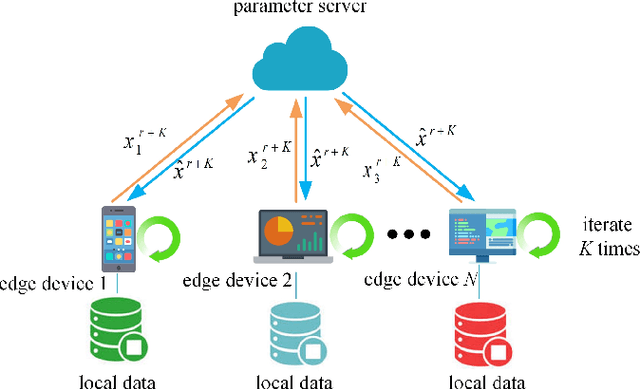

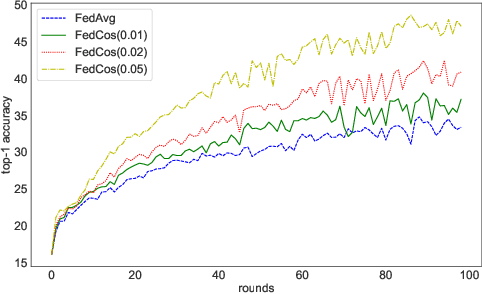

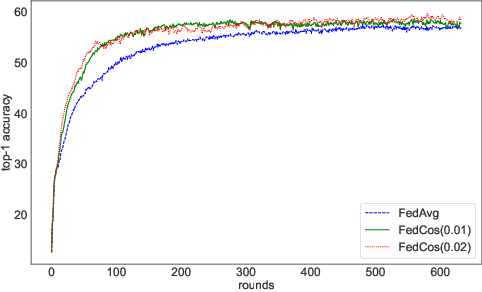

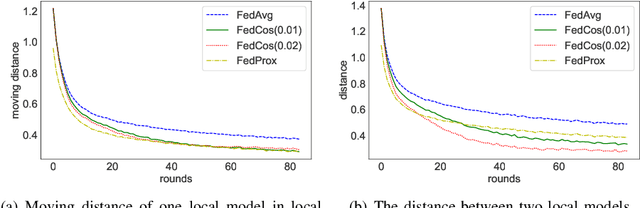

Federated learning (FL) is an emerging technology that trains machine learning models over distributed edge devices; it has attracted sustained attention and has been studied extensively. However, the heterogeneity of client data severely degrades the performance of FL relative to centralized training. This heterogeneity causes the locally trained models of different clients to move in different directions. On the one hand, this slows down or even stalls the global updates, leading to inefficient communication. On the other hand, it enlarges the distances between local models, resulting in an aggregated global model with poor performance. Fortunately, these shortcomings can be mitigated by reducing the angle between the directions in which the local models move. Based on this observation, we propose FedCos, which reduces the directional inconsistency of local models by introducing a cosine-similarity penalty. The penalty steers the local model iterations towards an auxiliary global direction. Moreover, our approach adapts automatically to various non-IID settings without an elaborate selection of hyperparameters. The experimental results show that FedCos outperforms well-known baselines and can enhance them under a variety of FL scenes, including varying degrees of data heterogeneity, different numbers of participants, and both cross-silo and cross-device settings. In addition, FedCos improves communication efficiency by 2 to 5 times: with the help of FedCos, multiple FL methods require significantly fewer communication rounds than before to obtain a model with comparable performance.
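To make the idea of a cosine-similarity penalty concrete, the following is a minimal sketch and not the paper's exact formulation. It assumes the penalty takes the form 1 - cos(θ), where θ is the angle between a client's local update and a reference global direction. The names `cosine_penalty`, `penalized_loss`, `global_direction`, and the weight `mu` are all hypothetical and chosen for illustration only.

```python
import numpy as np


def cosine_penalty(local_delta, global_direction, eps=1e-12):
    """Return 1 - cos(angle) between a local update and a reference direction.

    Assumed form for illustration: 0 when the two vectors are aligned,
    1 when they are orthogonal, 2 when they point in opposite directions.
    """
    dot = float(np.dot(local_delta, global_direction))
    norms = float(np.linalg.norm(local_delta) * np.linalg.norm(global_direction))
    return 1.0 - dot / (norms + eps)  # eps guards against division by zero


def penalized_loss(task_loss, w_local, w_global, global_direction, mu=0.1):
    """Add the directional penalty to a client's task loss.

    w_local - w_global is the direction the local model has moved in since
    the last synchronization; mu is a hypothetical penalty weight.
    """
    local_delta = w_local - w_global
    return task_loss + mu * cosine_penalty(local_delta, global_direction)


if __name__ == "__main__":
    w_global = np.array([1.0, 0.0])
    direction = np.array([0.0, 3.0])  # hypothetical auxiliary global direction
    aligned = np.array([1.0, 1.0])    # moved along the reference direction
    opposed = np.array([1.0, -1.0])   # moved against it
    print(penalized_loss(0.5, aligned, w_global, direction))
    print(penalized_loss(0.5, opposed, w_global, direction))
```

Under this assumed form, a client whose update agrees with the reference direction pays almost no penalty, while a client drifting the opposite way pays the largest one, which is the behavior the abstract attributes to reducing the angle between local update directions.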