FedCau: A Proactive Stop Policy for Communication and Computation Efficient Federated Learning

Apr 16, 2022

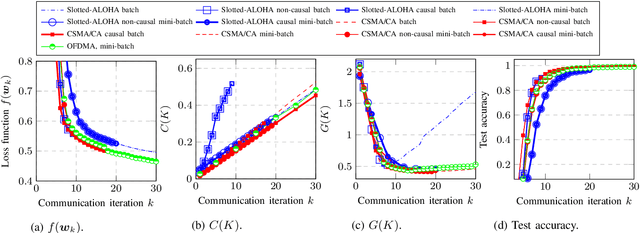

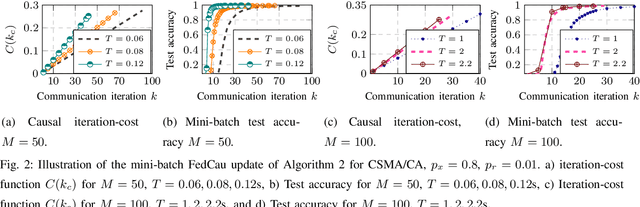

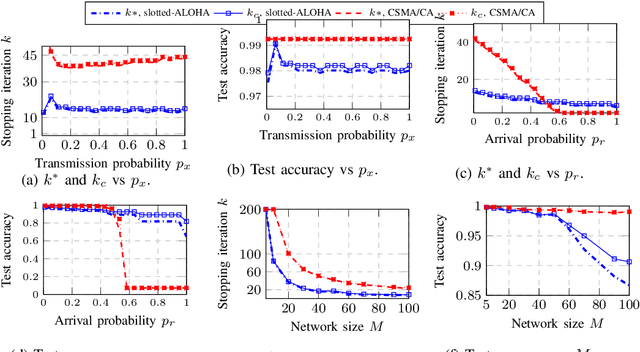

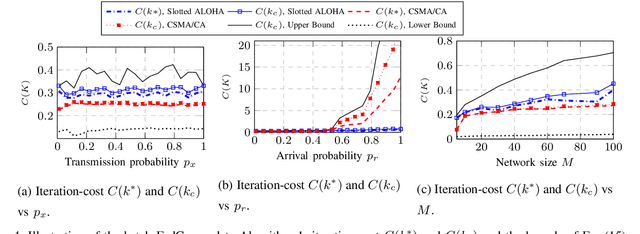

This paper investigates efficient distributed training of a Federated Learning (FL) model over a network of wireless devices. The communication iterations of the distributed training algorithm may be substantially degraded or even blocked by the devices' background traffic, packet losses, congestion, or latency. We abstract these communication-computation impacts as an 'iteration cost' and propose a cost-aware causal FL algorithm (FedCau) to tackle this problem. We propose an iteration-termination method that trades off training performance against networking costs. We apply our approach when clients use the slotted-ALOHA, carrier-sense multiple access with collision avoidance (CSMA/CA), and orthogonal frequency-division multiple access (OFDMA) protocols. We show that, given a total cost budget, the training performance degrades as either the background communication traffic or the dimension of the training problem increases. Our results demonstrate the importance of proactively designing optimal cost-efficient stopping criteria, so as to avoid communication-computation costs that buy only a marginal improvement in FL training. We validate our method by training and testing FL on the MNIST dataset. Finally, we apply our approach to existing communication-efficient FL methods from the literature and achieve further efficiency gains. We conclude that cost-efficient stopping criteria are essential for the success of practical FL over wireless networks.
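The abstract does not spell out FedCau's actual stopping criterion or cost model. Purely as an illustration of the idea, the sketch below shows one simple *causal* cost-aware stopping rule: before running the next iteration, it compares the most recently observed loss drop against a weighted cost of that iteration and checks a total budget, using only past observations. The per-iteration cost here is the textbook expected wait in slotted ALOHA for one tagged device competing with `others` contenders, each transmitting with probability `p`. All names (`aloha_expected_slots`, `causal_stop`, `lam`, `budget`) are hypothetical, and this is not the paper's algorithm.

```python
from typing import Sequence


def aloha_expected_slots(p: float, others: int) -> float:
    """Expected number of slots until a tagged device succeeds (slotted ALOHA).

    A slot is a success for the tagged device when it transmits (prob. p) and
    none of the `others` contending devices transmit (prob. (1 - p)**others).
    Slots are independent, so the wait is geometric with mean 1 / success.
    (Textbook model; an assumption, not the paper's cost model.)
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must be in (0, 1)")
    if others < 0:
        raise ValueError("others must be non-negative")
    success = p * (1.0 - p) ** others
    return 1.0 / success


def causal_stop(losses: Sequence[float], costs: Sequence[float],
                lam: float, budget: float) -> int:
    """Return how many iterations a hypothetical causal stopping rule runs.

    losses[k] is the training loss after iteration k (losses[0] is initial).
    costs[k] is the cost of iteration k + 1.
    Iteration k + 1 runs only if it fits in the remaining budget and, for
    k >= 1, the last observed gain losses[k - 1] - losses[k] is at least
    lam * costs[k]. Only already-observed losses are used (causal).
    """
    if len(losses) < len(costs) + 1:
        raise ValueError("need one more loss value than cost values")
    spent = 0.0
    k = 0  # iterations completed so far
    while k < len(costs):
        next_cost = costs[k]
        # Budget check: never start an iteration we cannot pay for.
        if spent + next_cost > budget:
            break
        # Marginal-benefit check: stop if the latest gain is not worth the cost.
        if k >= 1 and losses[k - 1] - losses[k] < lam * next_cost:
            break
        spent += next_cost
        k += 1
    return k
```

With a geometrically decaying loss sequence such as `[1.0, 0.5, 0.25, 0.125, 0.0625]` and `lam = 0.01`, raising the background contention from `others = 0` to `others = 9` (with `p = 0.1`) raises each iteration's expected cost from about 10 to about 25.8 slots, so this rule stops after 2 iterations instead of 4. That matches the qualitative trend the abstract reports, but only under the assumptions stated here.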
