Fast Graph Attention Networks Using Effective Resistance Based Graph Sparsification

Paper and Code

Jun 17, 2020

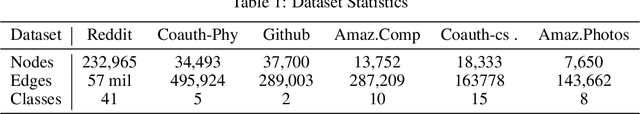

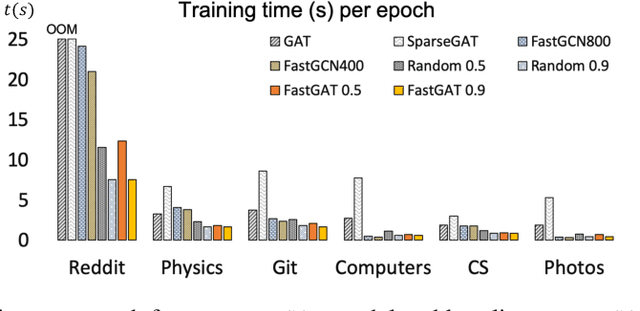

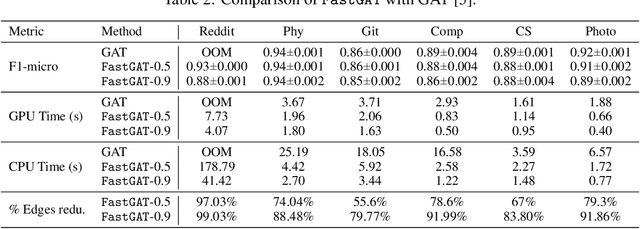

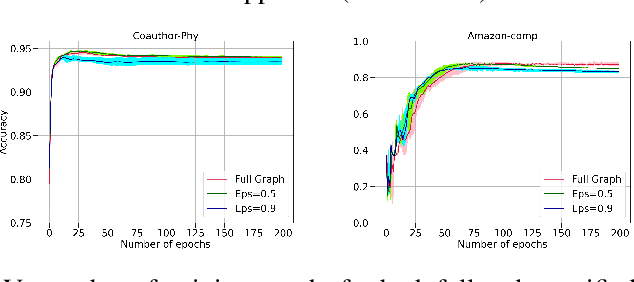

The attention mechanism has demonstrated superior performance for inference over nodes in graph neural networks (GNNs), however, they result in a high computational burden during both training and inference. We propose FastGAT, a method to make attention based GNNs lightweight by using spectral sparsification to generate an optimal pruning of the input graph. This results in a per-epoch time that is almost linear in the number of graph nodes as opposed to quadratic. Further, we provide a re-formulation of a specific attention based GNN, Graph Attention Network (GAT) that interprets it as a graph convolution method using the random walk normalized graph Laplacian. Using this framework, we theoretically prove that spectral sparsification preserves the features computed by the GAT model, thereby justifying our FastGAT algorithm. We experimentally evaluate FastGAT on several large real world graph datasets for node classification tasks, FastGAT can dramatically reduce (up to 10x) the computational time and memory requirements, allowing the usage of attention based GNNs on large graphs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge