Extreme View Synthesis


Dec 12, 2018

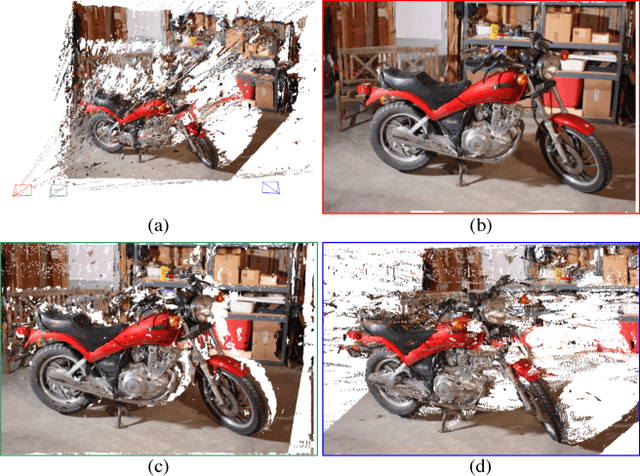

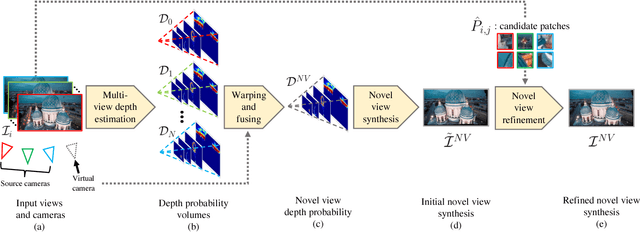

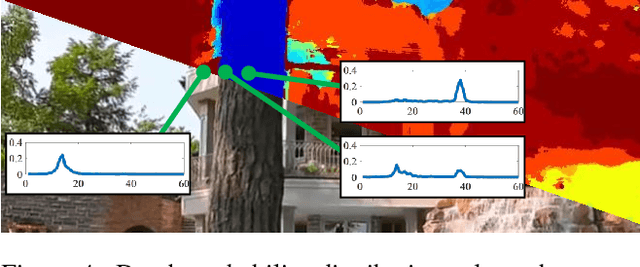

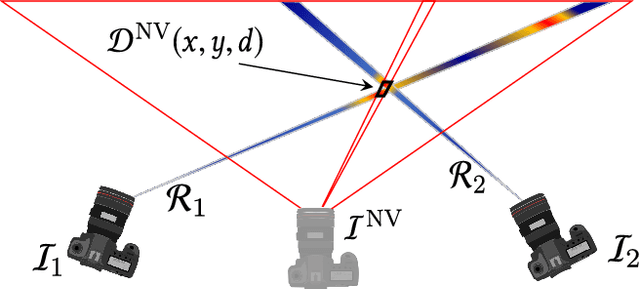

We present Extreme View Synthesis, a solution for novel view extrapolation when the number of input images is small. In this context, occlusions and depth uncertainty are two of the most pressing issues, and both worsen as the degree of extrapolation increases. State-of-the-art methods approach this problem by leveraging either explicit geometric constraints or learned priors. Our key insight is that the extreme cases can be solved only by modeling both depth uncertainty and image priors. We first generate a depth probability volume for the novel view and synthesize an estimate of the sought image. We then refine this estimate using learned priors combined with depth uncertainty. Our method is the first to show visually pleasing results for baseline magnifications of up to 30X.
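To make the idea of a depth probability volume more concrete, here is a minimal sketch of how a per-pixel distribution over candidate depth planes can yield both a depth estimate and an uncertainty value. This is an illustration only, not the authors' implementation: the function names, the choice of entropy as the uncertainty measure, and the sample depth planes are all assumptions introduced here.

```python
import math

def normalize(scores):
    """Turn non-negative per-plane scores into a probability distribution."""
    total = sum(scores)
    if total <= 0:
        # No evidence for any plane: fall back to a uniform distribution.
        return [1.0 / len(scores)] * len(scores)
    return [s / total for s in scores]

def expected_depth(probs, depths):
    """Probability-weighted mean of the candidate depths."""
    return sum(p * d for p, d in zip(probs, depths))

def entropy(probs):
    """Shannon entropy in nats; higher means the depth is more uncertain."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def summarize_pixel(scores, depths):
    """Return (expected depth, uncertainty) for one pixel of the volume."""
    probs = normalize(scores)
    return expected_depth(probs, depths), entropy(probs)

# Hypothetical depth planes (arbitrary units), assumed for illustration.
depths = [1.0, 2.0, 4.0, 8.0]

# One pixel with all probability mass on a single plane,
# and one pixel where every plane is equally likely.
confident = summarize_pixel([0.0, 1.0, 0.0, 0.0], depths)
ambiguous = summarize_pixel([1.0, 1.0, 1.0, 1.0], depths)
```

In this sketch the ambiguous pixel has a higher entropy than the confident one. A refinement stage could use such a value to decide where a learned prior should be trusted over the geometric estimate, though how the paper combines the two is not specified in this abstract.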
