Explicitly Learning Topology for Differentiable Neural Architecture Search

Nov 18, 2020

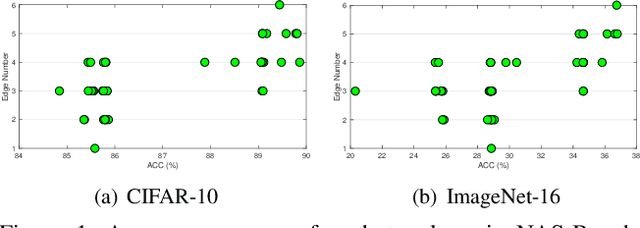

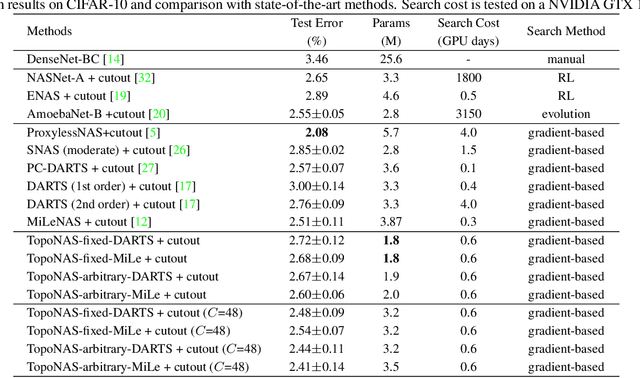

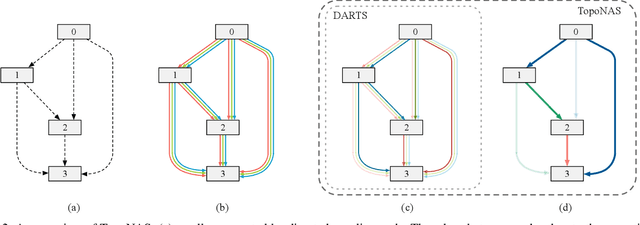

Differentiable neural architecture search (DARTS) has achieved considerable success in discovering more flexible and diverse cell types. Current methods couple operations and topology during search and derive the final topology with a simple hand-crafted rule. However, topology also matters for neural architectures, since it controls how the features produced by different operations interact. In this paper, we highlight topology learning in differentiable NAS and propose an explicit topology modeling method, named TopoNAS, that directly decouples operation selection from topology during search. Concretely, we introduce a set of topological variables and a combinatorial probabilistic distribution to explicitly indicate the target topology. We also leverage a passive-aggressive regularization to suppress invalid topologies within the supernet. The introduced topological variables can be learned jointly with the operation variables and supernet weights, and can be applied to various DARTS variants. Extensive experiments on CIFAR-10 and ImageNet validate the effectiveness of TopoNAS. The results show that TopoNAS can search cells with more diverse and complex topologies and significantly boosts performance. For example, TopoNAS improves the accuracy of DARTS on CIFAR-10 by 0.16% with 40% fewer parameters, or by 0.35% with a similar number of parameters.
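The abstract does not spell out how the topological variables are parameterized, so the following is only a minimal sketch under stated assumptions. It assumes one learnable logit per k-subset of a node's incoming edges (k = 2, matching the DARTS rule of keeping two inputs per intermediate node), normalizes those logits with a softmax to obtain a combinatorial distribution over topologies, and weights each edge by the total probability of the subsets that contain it. Operation selection stays a separate DARTS-style softmax over candidate operations, which is what "decoupling" operations from topology means here. All function names (`topology_distribution`, `mixed_op`, `node_output`, `derive_topology`) and the variable names `alpha`/`beta` are hypothetical, and the passive-aggressive regularization is not modeled.

```python
import itertools
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def topology_distribution(num_inputs, beta, k=2):
    """Hypothetical topology parameterization (an assumption, not the paper's code).

    beta holds one logit per k-subset of the node's input edges. Returns the
    subsets, their softmax probabilities, and a per-edge weight equal to the
    summed probability of every subset containing that edge.
    """
    combos = list(itertools.combinations(range(num_inputs), k))
    if len(beta) != len(combos):
        raise ValueError(f"expected {len(combos)} logits, got {len(beta)}")
    probs = softmax(beta)
    edge_weights = [0.0] * num_inputs
    for combo, p in zip(combos, probs):
        for edge in combo:
            edge_weights[edge] += p
    return combos, probs, edge_weights


def mixed_op(x, alpha, ops):
    """DARTS-style relaxation: softmax(alpha)-weighted sum of candidate ops."""
    weights = softmax(alpha)
    return sum(w * op(x) for w, op in zip(weights, ops))


def node_output(inputs, alphas, beta, ops, k=2):
    """Node output: each edge's mixed op, scaled by its topology weight."""
    _, _, edge_weights = topology_distribution(len(inputs), beta, k)
    return sum(w * mixed_op(x, a, ops)
               for w, x, a in zip(edge_weights, inputs, alphas))


def derive_topology(combos, probs):
    """Discrete topology after search: the most probable k-subset of inputs."""
    best = max(range(len(combos)), key=lambda i: probs[i])
    return combos[best]


if __name__ == "__main__":
    # Toy node with 3 incoming edges and 2 candidate ops (identity, zero).
    ops = [lambda x: x, lambda x: 0.0]
    combos, probs, weights = topology_distribution(3, [2.0, 0.0, 0.0])
    print(combos, derive_topology(combos, probs))
    print(node_output([1.0, 1.0, 1.0], [[0.0, 0.0]] * 3, [0.0, 0.0, 0.0], ops))
```

Because every subset contributes its probability to exactly k edges, the edge weights always sum to k, so the relaxed node keeps the same "budget" of inputs as the discrete cell derived at the end. In this sketch both `alpha` and `beta` would be updated by gradient descent alongside the supernet weights.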