Experimental Analysis of Reinforcement Learning Techniques for Spectrum Sharing Radar

Jan 06, 2020

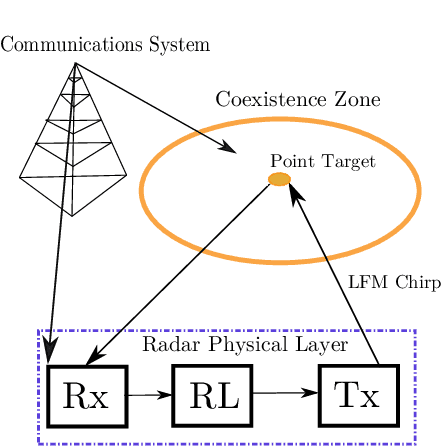

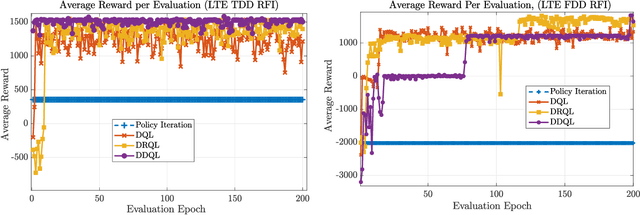

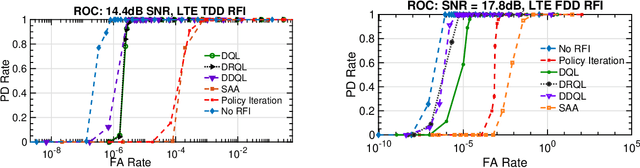

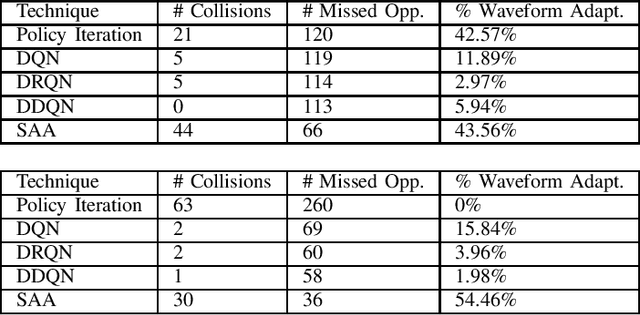

In this work, we first describe a framework for applying Reinforcement Learning (RL) control to a radar system operating in a congested spectral environment. We then compare the utility of several RL algorithms through experiments performed on commercial off-the-shelf (COTS) hardware. Each RL technique is evaluated in terms of convergence, the radar detection performance achieved in a congested spectral environment, and the ability to share 100 MHz of spectrum with an uncooperative communications system. We examine policy iteration, which treats the environment as a Markov Decision Process (MDP) and directly solves for a stochastic mapping between environmental states and radar waveforms, as well as Deep RL techniques, which use a form of Q-learning to approximate a parameterized function that the radar uses to select optimal actions. We show that RL techniques offer benefits over a Sense-and-Avoid (SAA) scheme and discuss the conditions under which each approach is most effective.
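As a rough illustration of the policy iteration idea mentioned above, the following sketch solves a toy MDP in which an interferer hops between three sub-bands and the radar picks one sub-band to transmit in. Everything here is an assumption made for illustration and is not taken from the paper: the three-band layout, the transition matrix `T`, the reward (probability of avoiding the interferer on the next step), and the discount factor. The sketch also uses the textbook deterministic greedy policy, whereas the abstract describes a stochastic mapping.

```python
import numpy as np

def policy_iteration(P, R, gamma=0.9, max_iter=100):
    """Textbook policy iteration on a finite MDP.

    P[a, s, s2] is the probability of moving from state s to s2 under action a.
    R[s, a] is the expected immediate reward for taking action a in state s.
    """
    n_actions, n_states, _ = P.shape
    states = np.arange(n_states)
    policy = np.zeros(n_states, dtype=int)
    for _ in range(max_iter):
        # Policy evaluation: solve (I - gamma * P_pi) V = R_pi exactly.
        P_pi = P[policy, states]          # row s is P[policy[s], s, :]
        R_pi = R[states, policy]
        V = np.linalg.solve(np.eye(n_states) - gamma * P_pi, R_pi)
        # Policy improvement: act greedily with respect to Q.
        Q = R + gamma * np.einsum("asn,n->sa", P, V)
        new_policy = Q.argmax(axis=1)
        if np.array_equal(new_policy, policy):
            break
        policy = new_policy
    return policy, V

# Hypothetical interferer model: state = sub-band the interferer occupies now.
T = np.array([[0.80, 0.20, 0.00],
              [0.15, 0.80, 0.05],
              [0.00, 0.20, 0.80]])
# The interferer is uncooperative, so the radar's action does not change T.
P = np.stack([T, T, T])
# Reward: probability that the radar's chosen band is free on the next step.
R = 1.0 - T

policy, V = policy_iteration(P, R)
print("radar band per interferer state:", policy)
```

Because the interferer in this toy model ignores the radar, the learned policy simply steers toward the band the interferer is least likely to occupy next. A more realistic model would let the state include bandwidth and the reward include radar detection metrics.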
