Efficient non-uniform quantizer for quantized neural network targeting reconfigurable hardware


Nov 27, 2018

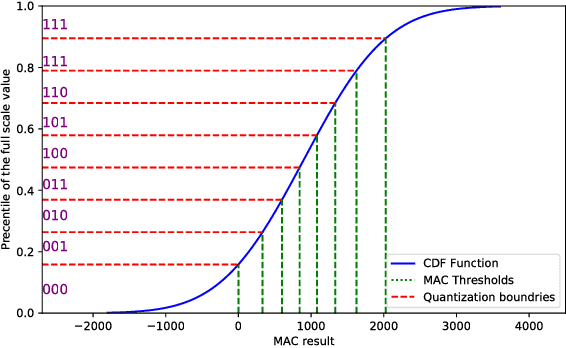

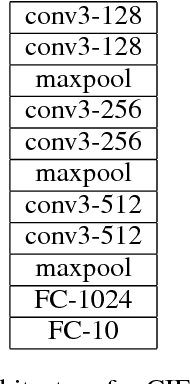

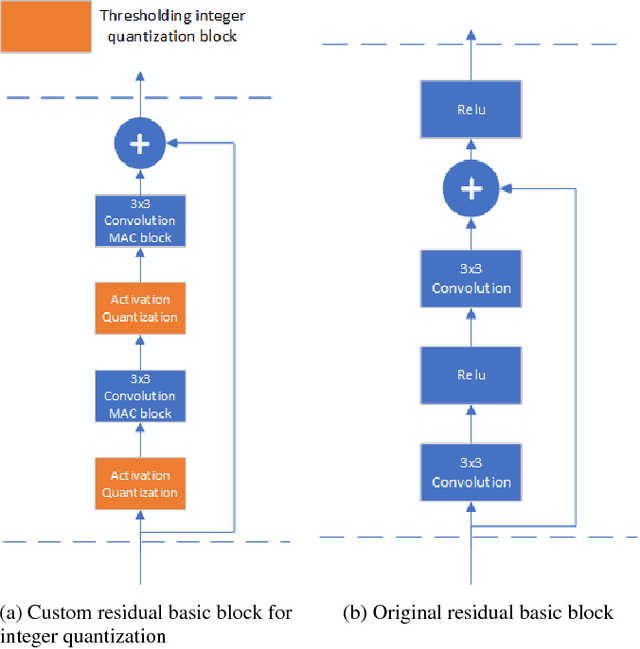

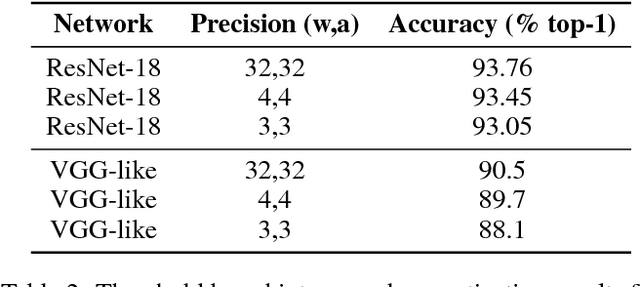

Convolutional neural networks (CNNs) have become a popular choice for tasks such as computer vision, speech recognition, and natural language processing. Thanks to their large computational capability and throughput, GPUs are the most common platform for both training and inference, but they are not power efficient and therefore do not suit low-power systems such as mobile devices. Recent studies have shown that FPGAs can provide a good alternative to GPUs as CNN accelerators, due to their reconfigurable nature, low power, and low latency. For FPGA-based accelerators to outperform GPUs in inference, both the parameters of the network and the activations must be quantized. While most works use uniform quantizers for both parameters and activations, a uniform quantizer is not always optimal, and non-uniform quantizers need to be considered. In this work we introduce a custom hardware-friendly approach to implementing non-uniform quantizers. In addition, we use a single-scale integer representation of both parameters and activations, for both training and inference. The combined method yields a hardware-efficient non-uniform quantizer, fit for real-time applications. We tested our method on the CIFAR-10 and CIFAR-100 image classification datasets with ResNet-18 and VGG-like architectures, and saw little degradation in accuracy.
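To illustrate why a uniform quantizer is not always optimal, the following minimal sketch compares evenly spaced levels with non-uniform levels on bell-shaped synthetic data. It rests on several assumptions: the non-uniform levels come from the textbook Lloyd-Max iteration, swapped in as a generic stand-in and not the hardware-friendly method the abstract refers to; the Gaussian "weights", the 3-bit width, and all function names are hypothetical choices made for illustration.

```python
import bisect
import random
import statistics


def uniform_levels(samples, bits):
    """Evenly spaced reconstruction levels spanning the sample range."""
    n = 2 ** bits
    lo, hi = min(samples), max(samples)
    step = (hi - lo) / n
    return [lo + (i + 0.5) * step for i in range(n)]


def encode(x, levels):
    """Integer code of the level nearest to x (levels must be sorted)."""
    i = bisect.bisect_left(levels, x)
    if i == 0:
        return 0
    if i == len(levels):
        return len(levels) - 1
    return i if levels[i] - x < x - levels[i - 1] else i - 1


def lloyd_max_levels(samples, bits, iterations=20):
    """Non-uniform levels via Lloyd-Max, started from the uniform levels.

    Assumption: generic textbook quantizer, not the paper's approach.
    """
    levels = uniform_levels(samples, bits)
    for _ in range(iterations):
        buckets = [[] for _ in levels]
        for x in samples:
            buckets[encode(x, levels)].append(x)
        # Move each level to the mean of the samples assigned to it;
        # a level with no samples keeps its previous value.
        levels = [statistics.fmean(b) if b else lv
                  for b, lv in zip(buckets, levels)]
    return levels


def mse(samples, levels):
    """Mean squared error after encoding to integer codes and decoding."""
    errors = [(x - levels[encode(x, levels)]) ** 2 for x in samples]
    return statistics.fmean(errors)


random.seed(0)
# Hypothetical stand-in for trained weights: zero-mean Gaussian samples.
weights = [random.gauss(0.0, 1.0) for _ in range(5000)]
bits = 3

uniform = uniform_levels(weights, bits)
nonuniform = lloyd_max_levels(weights, bits)

print("uniform MSE:    ", mse(weights, uniform))
print("non-uniform MSE:", mse(weights, nonuniform))
```

In this sketch every value is stored as a small integer code and decoded through a lookup into the level list. The non-uniform levels cluster where the samples are dense, which is why their reconstruction error on this data is lower than that of the evenly spaced levels.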
