Dynamic Multi-Scale Loss Optimization for Object Detection

Aug 09, 2021

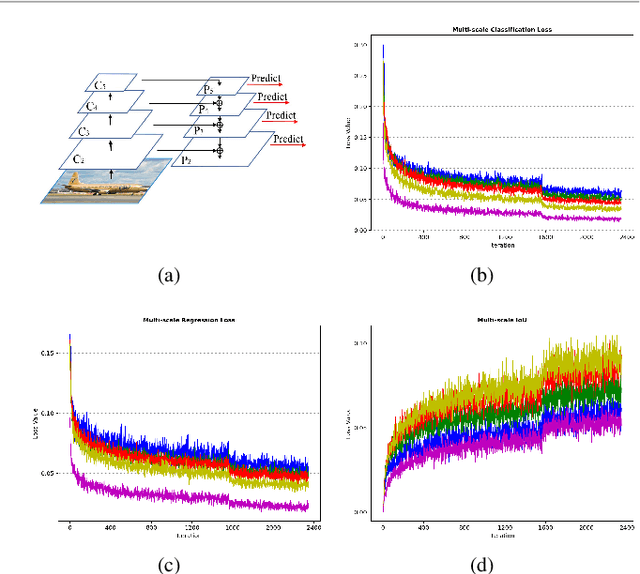

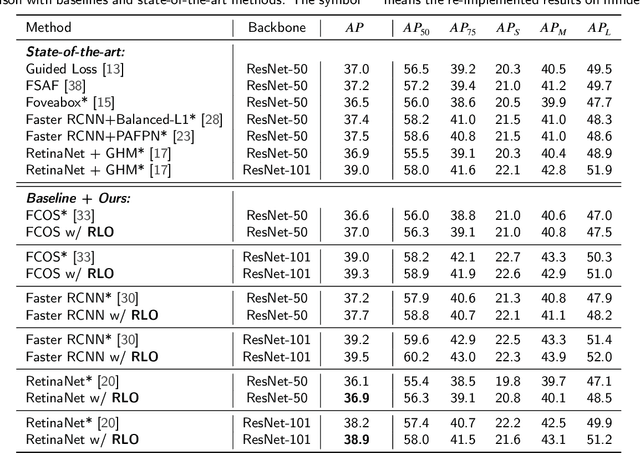

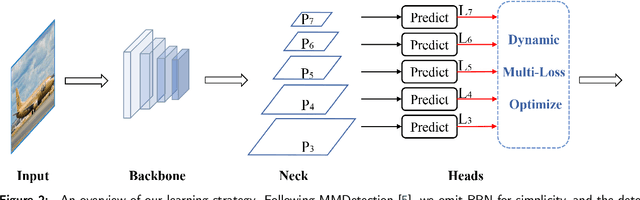

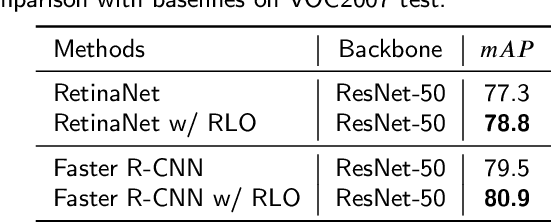

As advanced model architectures continue to improve object detector performance, imbalance problems in the training process have received more attention. Multi-scale detection is a common paradigm in object detection frameworks, yet each scale is typically treated equally during training. In this paper, we carefully study the objective imbalance of multi-scale detector training. We argue that the losses at different scale levels are neither equally important nor independent. Unlike existing solutions that set multi-task weights, we dynamically optimize the loss weight of each scale level during training. Specifically, we propose Adaptive Variance Weighting (AVW) to balance the multi-scale loss according to its statistical variance. We then develop a novel Reinforcement Learning Optimization (RLO) to decide the weighting scheme probabilistically during training. The proposed dynamic methods make better use of the multi-scale training loss without adding computational complexity or learnable parameters for backpropagation. Experiments show that our approaches consistently boost the performance of various baseline detectors on the Pascal VOC and MS COCO benchmarks.
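
To make the idea of variance-based weighting concrete, here is a minimal sketch of how per-level loss weights could be derived from loss statistics without any learnable parameters. It is not the AVW formulation from the paper, which the abstract does not spell out. The class name `VarianceLossWeighter`, the sliding `window`, the choice to give higher-variance levels larger weights, and the normalization that makes the weights sum to the number of levels are all assumptions made for illustration.

```python
from collections import deque
from statistics import pvariance


class VarianceLossWeighter:
    """Hypothetical helper: weights each scale level by the variance of its recent losses.

    Assumption (not from the paper): weight is proportional to variance, and the
    weights are rescaled so that they sum to the number of levels.
    """

    def __init__(self, num_levels, window=50, eps=1e-8):
        self.num_levels = num_levels
        self.eps = eps  # keeps weights finite when a level's loss is constant
        self.history = [deque(maxlen=window) for _ in range(num_levels)]

    def update(self, level_losses):
        """Record one training step's loss value for every scale level."""
        if len(level_losses) != self.num_levels:
            raise ValueError("expected one loss value per scale level")
        for hist, loss in zip(self.history, level_losses):
            hist.append(float(loss))

    def weights(self):
        """Return one weight per level; uniform until enough history exists."""
        if any(len(hist) < 2 for hist in self.history):
            return [1.0] * self.num_levels
        variances = [pvariance(list(hist)) + self.eps for hist in self.history]
        total = sum(variances)
        return [self.num_levels * v / total for v in variances]

    def weighted_loss(self, level_losses):
        """Combine per-level losses using the current weights."""
        return sum(w * l for w, l in zip(self.weights(), level_losses))
```

In a training loop, `update` would be called with detached scalar losses from each pyramid level, and `weighted_loss` would replace the plain sum. Because the weights come from running statistics rather than gradients, this sketch adds no parameters to backpropagation, which matches the property the abstract claims for the dynamic methods.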
