DPR-CAE: Capsule Autoencoder with Dynamic Part Representation for Image Parsing

Paper and Code

Apr 30, 2021

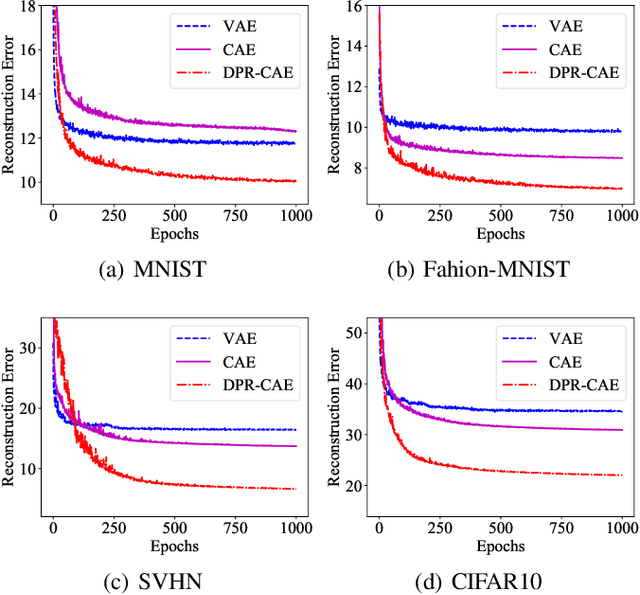

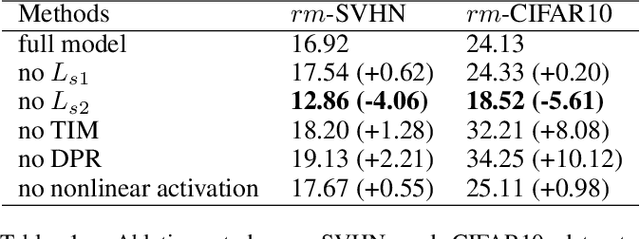

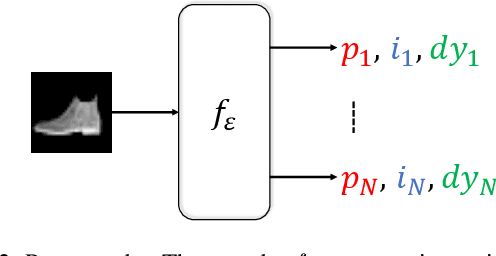

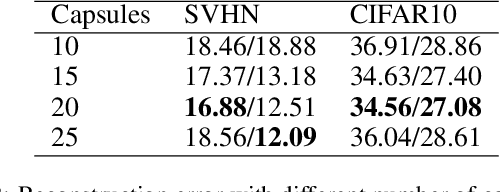

Parsing an image into a hierarchy of objects, parts, and relations is important and also challenging in many computer vision tasks. This paper proposes a simple and effective capsule autoencoder to address this issue, called DPR-CAE. In our approach, the encoder parses the input into a set of part capsules, including pose, intensity, and dynamic vector. The decoder introduces a novel dynamic part representation (DPR) by combining the dynamic vector and a shared template bank. These part representations are then regulated by corresponding capsules to composite the final output in an interpretable way. Besides, an extra translation-invariant module is proposed to avoid directly learning the uncertain scene-part relationship in our DPR-CAE, which makes the resulting method achieves a promising performance gain on $rm$-MNIST and $rm$-Fashion-MNIST. % to model the scene-object relationship DPR-CAE can be easily combined with the existing stacked capsule autoencoder and experimental results show it significantly improves performance in terms of unsupervised object classification. Our code is available in the Appendix.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge