Direct Advantage Estimation

Paper and Code

Sep 13, 2021

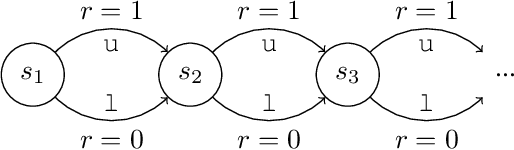

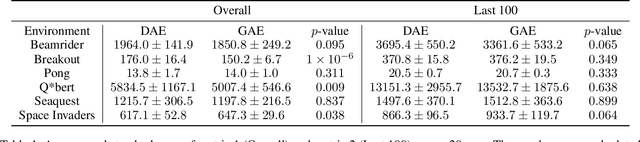

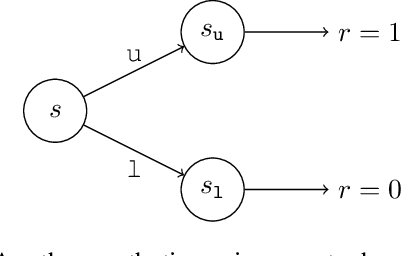

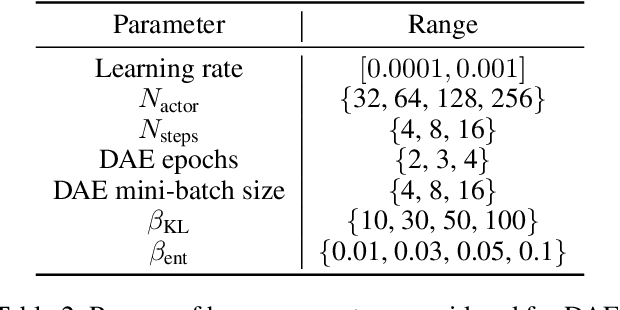

Credit assignment is one of the central problems in reinforcement learning. The predominant approach assigns credit based on the expected return. However, we show that the expected return may depend on the policy in an undesirable way, which could slow down learning. Instead, we borrow ideas from the causality literature and show that the advantage function can be interpreted as causal effects, which share similar properties with causal representations. Based on this insight, we propose Direct Advantage Estimation (DAE), a novel method that can model the advantage function and estimate it directly from data without requiring the (action-)value function. If desired, value functions can also be seamlessly integrated into DAE and updated in a way similar to temporal difference learning. The proposed method is easy to implement and can be readily adopted by modern actor-critic methods. We test DAE empirically on the Atari domain and show that it can achieve results competitive with the state-of-the-art method for advantage estimation.
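The abstract does not spell out DAE's training objective. The sketch below illustrates the idea under two loudly stated assumptions. (1) Raw per-action scores f(s, a) are turned into advantage estimates by subtracting their policy-weighted mean, so that sum_a pi(a|s) A(s, a) = 0. Every true advantage function satisfies this identity, because V^pi(s) is the pi-weighted average of Q^pi(s, ·). (2) A and V are fit by minimizing the squared residual of the n-step identity V(s_0) = sum_t gamma^t (r_t - A(s_t, a_t)) + gamma^n V(s_n). This identity holds in expectation for the true A and V, as follows by telescoping r_t + gamma V(s_{t+1}) - V(s_t) - A(s_t, a_t). The names `center_advantage`, `consistency_residual`, and `consistency_loss` are hypothetical. This is not claimed to be the paper's exact loss or implementation.

```python
from typing import List, Sequence


def center_advantage(f_values: Sequence[float], pi: Sequence[float]) -> List[float]:
    """Assumed parameterization: A(s, a) = f(s, a) - sum_b pi(b|s) f(s, b).

    The result has zero mean under pi, which is a property every true
    advantage function has with respect to its own policy.
    """
    baseline = sum(p * f for p, f in zip(pi, f_values))
    return [f - baseline for f in f_values]


def consistency_residual(
    rewards: Sequence[float],
    advantages: Sequence[float],
    v_start: float,
    v_boot: float,
    gamma: float,
) -> float:
    """Residual of the (assumed) n-step identity
    V(s_0) = sum_t gamma^t (r_t - A(s_t, a_t)) + gamma^n V(s_n).

    `advantages` are A(s_t, a_t) for the actions actually taken;
    `v_boot` is V(s_n) (use 0.0 if s_n is terminal).
    """
    n = len(rewards)
    discounted = sum(
        gamma**t * (r - a) for t, (r, a) in enumerate(zip(rewards, advantages))
    )
    return discounted + gamma**n * v_boot - v_start


def consistency_loss(residuals: Sequence[float]) -> float:
    """Mean squared residual over a batch of trajectory segments."""
    return sum(r * r for r in residuals) / len(residuals)


if __name__ == "__main__":
    # Toy example: one state, two actions, episode ends after one step.
    # Action 0 pays 1.0, action 1 pays 0.0, and the policy is uniform,
    # so V = 0.5 and the true advantages are [0.5, -0.5].
    pi = [0.5, 0.5]
    adv = center_advantage([1.0, 0.0], pi)
    res = consistency_residual([1.0], [adv[0]], v_start=0.5, v_boot=0.0, gamma=0.99)
    print(adv, res, consistency_loss([res]))
```

When the true advantages and value are plugged in, the residual of this toy case is exactly zero. Passing a learned `v_boot` for a non-terminal s_n bootstraps the target from the value estimate, which is one plausible reading of how value functions could be "updated in a way similar to temporal difference learning"; the paper itself should be consulted for the actual formulation.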
