Dimension Independence in Unconstrained Private ERM via Adaptive Preconditioning


Aug 14, 2020

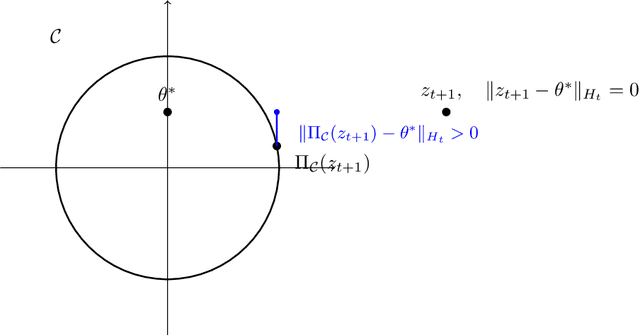

In this paper we revisit the problem of differentially private empirical risk minimization (ERM). We show that for unconstrained convex ERM, if the gradients of the objective function observed along the path of private gradient descent lie in a low-dimensional subspace (of dimension smaller than the ambient dimension $p$), then noisy adaptive preconditioning (a.k.a. noisy Adaptive Gradient Descent (AdaGrad)) achieves a regret composed of two terms: a constant multiplicative factor of the original AdaGrad regret and an additional regret due to noise. In particular, we show that if the gradients lie in a constant-rank subspace, then one can achieve an excess empirical risk of $\tilde{O}(1/(\epsilon n))$, compared to the worst-case achievable bound of $\tilde{O}(\sqrt{p}/(\epsilon n))$. While previous works show dimension-independent excess empirical risk bounds for the restrictive setting of convex generalized linear problems optimized over unconstrained subspaces, our results hold for general convex functions in unconstrained minimization. Along the way, we carry out a perturbation analysis of noisy AdaGrad, which may be of independent interest.
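The idea of noisy adaptive preconditioning can be illustrated with a minimal sketch. The code below is not the paper's algorithm: it assumes a diagonal AdaGrad preconditioner for brevity, a least-squares loss, and per-example gradient clipping. The names `noisy_adagrad`, `make_low_rank_data`, `clip_norm`, and `sigma` are hypothetical, and `sigma` is not calibrated to any particular $(\epsilon, \delta)$ privacy guarantee.

```python
import math
import random


def clip(vec, max_norm):
    """Rescale vec so its Euclidean norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in vec))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in vec]


def empirical_risk(theta, xs, ys):
    """Average squared loss 0.5 * (<theta, x> - y)^2 over the dataset."""
    total = 0.0
    for x, y in zip(xs, ys):
        residual = sum(t * xi for t, xi in zip(theta, x)) - y
        total += 0.5 * residual * residual
    return total / len(xs)


def noisy_adagrad(xs, ys, steps=200, lr=0.5, clip_norm=1.0, sigma=0.5, seed=0):
    """Diagonal AdaGrad driven by Gaussian-perturbed, clipped gradients."""
    rng = random.Random(seed)
    n, p = len(xs), len(xs[0])
    theta = [0.0] * p
    accum = [1e-8] * p  # running sum of squared noisy gradients
    for _ in range(steps):
        grad_sum = [0.0] * p
        for x, y in zip(xs, ys):
            residual = sum(t * xi for t, xi in zip(theta, x)) - y
            g = clip([residual * xi for xi in x], clip_norm)
            grad_sum = [a + b for a, b in zip(grad_sum, g)]
        # Gaussian noise is added to the clipped gradient sum, then averaged.
        noisy = [(g + rng.gauss(0.0, sigma * clip_norm)) / n for g in grad_sum]
        accum = [a + g * g for a, g in zip(accum, noisy)]
        # The preconditioner is built from the noisy gradients themselves.
        theta = [t - lr * g / math.sqrt(a) for t, g, a in zip(theta, noisy, accum)]
    return theta


def make_low_rank_data(n=50, p=20, seed=1):
    """Features supported on the first two coordinates, so gradients are rank <= 2."""
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(n):
        a, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
        xs.append([a, b] + [0.0] * (p - 2))
        ys.append(a - b)  # labels generated by theta* = (1, -1, 0, ..., 0)
    return xs, ys


if __name__ == "__main__":
    xs, ys = make_low_rank_data()
    theta = noisy_adagrad(xs, ys)
    print("risk at zero:", empirical_risk([0.0] * len(xs[0]), xs, ys))
    print("risk after noisy AdaGrad:", empirical_risk(theta, xs, ys))
```

In this toy setup the noise-free gradients occupy a two-dimensional subspace of the $p = 20$ ambient coordinates. Noise still perturbs the remaining coordinates of `theta`, but those coordinates do not affect the empirical risk because the features vanish there. The paper's analysis concerns the general case, which this sketch does not attempt to reproduce.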
