DiffBrush: Just Painting the Art by Your Hands


Feb 28, 2025

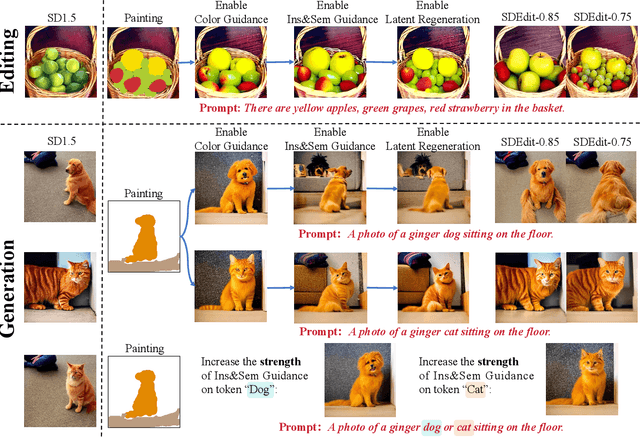

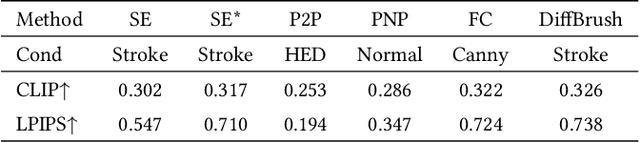

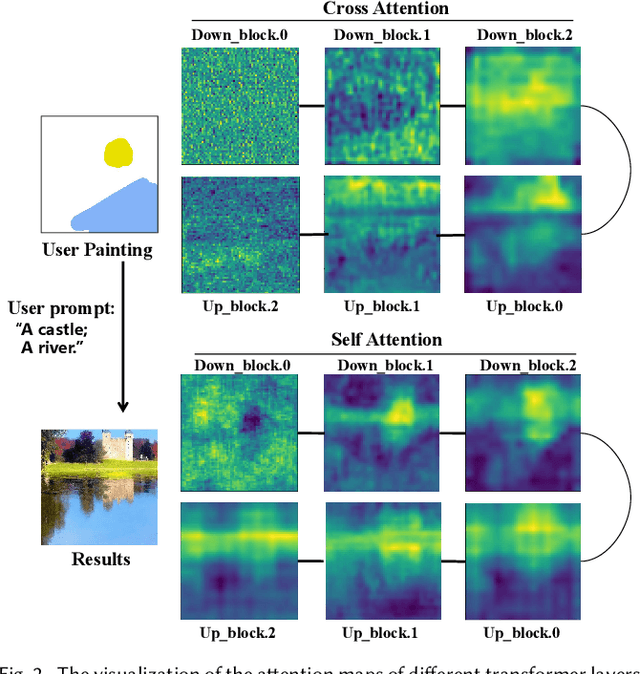

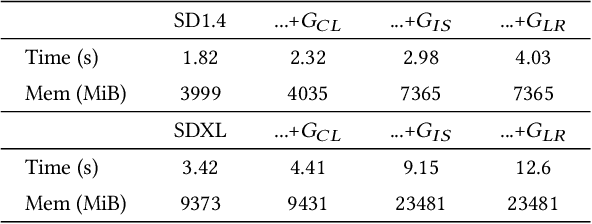

The rapid development of image generation and editing algorithms in recent years has enabled ordinary users to produce realistic images. However, the current AI painting ecosystem relies predominantly on text-driven text-to-image (T2I) diffusion models, which struggle to capture user requirements accurately. Furthermore, making these models compatible with other modalities incurs substantial training costs. To this end, we introduce DiffBrush, which is compatible with T2I models and allows users to draw and edit images. By manipulating and adapting the internal representation of the diffusion model, DiffBrush guides the generated image to converge towards the user's hand-drawn sketch, meeting the user's specific needs without additional training. DiffBrush controls the color, semantics, and instances of objects in an image by continuously guiding the latent and the instance-level attention maps during the denoising process of the diffusion model. In addition, we propose latent regeneration, which refines the randomly sampled noise of the diffusion model to obtain a better layout for the generated image. Finally, users only need to roughly draw a mask of each instance on the canvas (colored strokes are accepted), and DiffBrush naturally generates the corresponding instance at the corresponding location.
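To make the idea of training-free latent guidance more concrete, the toy sketch below nudges a latent towards a target inside a user-drawn mask by descending a simple masked squared-error energy. This is an illustration under stated assumptions and not the DiffBrush implementation: the energy function, the names `guide_latent` and `run_guided_steps`, the `step_size` parameter, and the use of plain NumPy arrays in place of a real diffusion model are all hypothetical, and the model's own denoising update is omitted.

```python
import numpy as np


def guide_latent(latent, target, mask, step_size=0.1):
    """Apply one gradient step that pulls `latent` towards `target` inside `mask`.

    Assumed energy (hypothetical): E = 0.5 * sum(mask * (latent - target) ** 2).
    Its gradient with respect to `latent` is mask * (latent - target).
    """
    grad = mask * (latent - target)
    return latent - step_size * grad


def run_guided_steps(latent, target, mask, num_steps=50, step_size=0.1):
    """Repeat the guidance step; a real sampler would interleave a denoising update."""
    for _ in range(num_steps):
        # A diffusion model's denoising update would go here (omitted in this toy).
        latent = guide_latent(latent, target, mask, step_size)
    return latent


# Example: a 4-channel 8x8 latent with a square mask in the middle.
rng = np.random.default_rng(0)
initial = rng.standard_normal((4, 8, 8))
sketch_target = rng.standard_normal((4, 8, 8))
region = np.zeros((1, 8, 8))
region[:, 2:6, 2:6] = 1.0  # broadcast over the channel axis

guided = run_guided_steps(initial, sketch_target, region)
```

In this toy setting only the masked region moves towards the target, while values outside the mask are left untouched, which mirrors the abstract's description of generating an instance at the location the user draws.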
