Developing a Framework for Auditing Large Language Models Using Human-in-the-Loop

Paper and Code

Feb 16, 2024

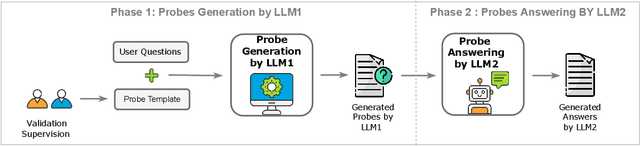

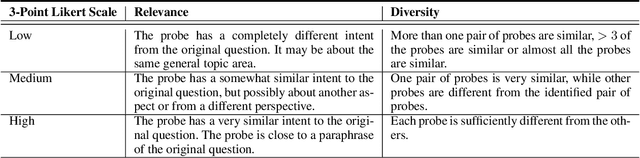

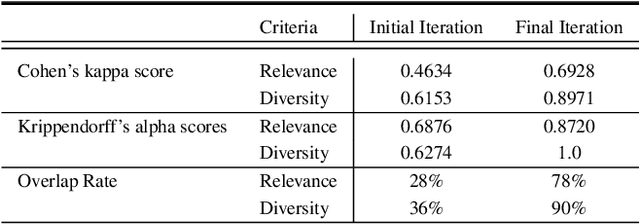

As LLMs become more pervasive across various users and scenarios, identifying potential issues when using these models becomes essential. Examples include bias, inconsistencies, and hallucination. Although auditing the LLM for these problems is desirable, it is far from being easy or solved. An effective method is to probe the LLM using different versions of the same question. This could expose inconsistencies in its knowledge or operation, indicating potential for bias or hallucination. However, to operationalize this auditing method at scale, we need an approach to create those probes reliably and automatically. In this paper we propose an automatic and scalable solution, where one uses a different LLM along with human-in-the-loop. This approach offers verifiability and transparency, while avoiding circular reliance on the same LLMs, and increasing scientific rigor and generalizability. Specifically, we present a novel methodology with two phases of verification using humans: standardized evaluation criteria to verify responses, and a structured prompt template to generate desired probes. Experiments on a set of questions from TruthfulQA dataset show that we can generate a reliable set of probes from one LLM that can be used to audit inconsistencies in a different LLM. The criteria for generating and applying auditing probes is generalizable to various LLMs regardless of the underlying structure or training mechanism.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge