Deep Proximal Learning for High-Resolution Plane Wave Compounding

Paper and Code

Dec 23, 2021

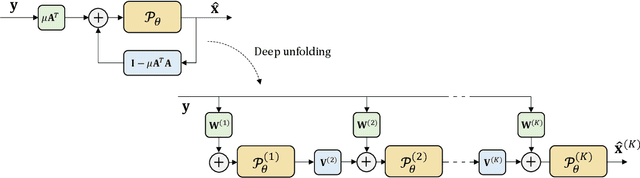

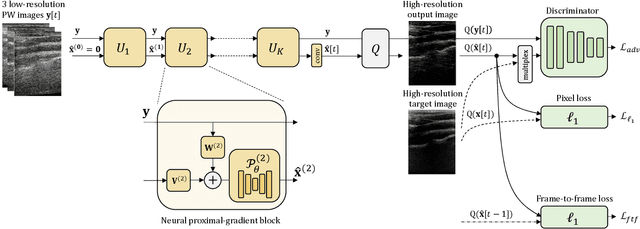

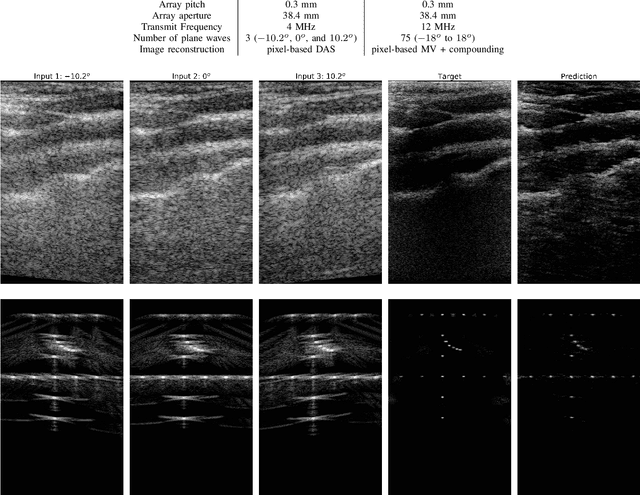

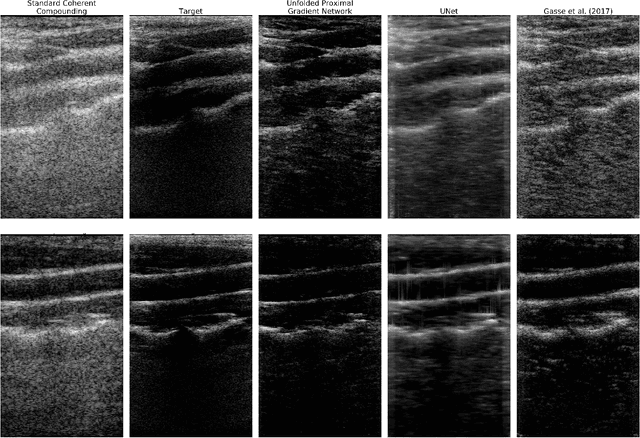

Plane wave imaging enables many applications that require high frame rates, including localisation microscopy, shear wave elastography, and ultra-sensitive Doppler. To alleviate the degradation in image quality relative to conventional focused acquisition, multiple acquisitions from distinctly steered plane waves are typically compounded coherently (i.e. after time-of-flight correction) into a single image. This poses a trade-off between image quality and achievable frame rate. To address this trade-off, we propose a new deep learning approach, derived by formulating plane wave compounding as a linear inverse problem, that attains high-resolution, high-contrast images from just 3 plane wave transmissions. Our solution unfolds the iterations of a proximal gradient descent algorithm as a deep network, thereby directly incorporating the physics-based generative acquisition model into the neural network design. We train our network in a greedy manner, i.e. layer-by-layer, using a combination of pixel, temporal, and distribution (adversarial) losses to achieve both perceptual fidelity and data consistency. Through its strong model-based inductive bias, the proposed architecture outperforms several standard benchmark architectures in terms of image quality, with a low computational and memory footprint.
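As a rough illustration of what unfolding proximal gradient descent means, the sketch below runs a fixed number of "layers", each applying a gradient step on the data-consistency term of a generic linear model y = Ax followed by a proximal step. It is not the network described in the abstract: the soft-thresholding operator is a simple stand-in for a learned proximal operator, the per-layer step sizes and thresholds are fixed by hand rather than trained, and all names (`matvec`, `rmatvec`, `soft_threshold`, `unfolded_pgd`) and the toy matrix are assumptions made for the example.

```python
def matvec(A, x):
    """Compute A @ x for a matrix stored as a list of rows."""
    return [sum(a * v for a, v in zip(row, x)) for row in A]


def rmatvec(A, r):
    """Compute A^T @ r for a matrix stored as a list of rows."""
    return [sum(A[i][j] * r[i] for i in range(len(A))) for j in range(len(A[0]))]


def soft_threshold(x, tau):
    """Proximal operator of tau * ||x||_1 (stand-in for a learned prox)."""
    return [max(abs(v) - tau, 0.0) * (1.0 if v > 0 else -1.0) for v in x]


def unfolded_pgd(y, A, steps, thresholds):
    """Run one proximal gradient iteration per (step, threshold) pair.

    Each pair plays the role of one layer of an unfolded network; in a
    trained model these values (and the prox itself) would be learned.
    """
    x = [0.0] * len(A[0])  # start from an all-zero estimate
    for mu, tau in zip(steps, thresholds):
        # Gradient step on 0.5 * ||y - A x||^2 (data consistency).
        residual = [yi - ai for yi, ai in zip(y, matvec(A, x))]
        x = [xi + mu * gi for xi, gi in zip(x, rmatvec(A, residual))]
        # Proximal step (prior / regulariser).
        x = soft_threshold(x, tau)
    return x


# Toy forward model: 3 measurements of a 2-element signal (hypothetical values).
A = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
x_true = [2.0, 0.0]
y = matvec(A, x_true)

n_layers = 50
estimate = unfolded_pgd(y, A, steps=[0.3] * n_layers, thresholds=[0.0] * n_layers)
print(estimate)
```

With all thresholds set to zero the layers reduce to plain gradient descent on the data term, which is a convenient sanity check; a non-zero threshold trades data consistency for sparsity in the estimate.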
