Dead Pixel Test Using Effective Receptive Field


Aug 31, 2021

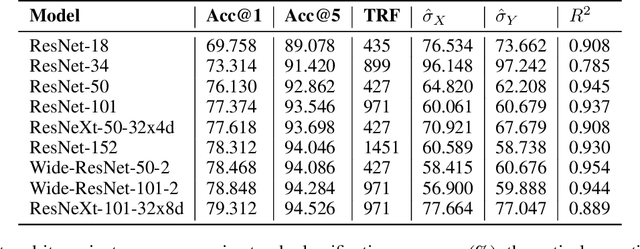

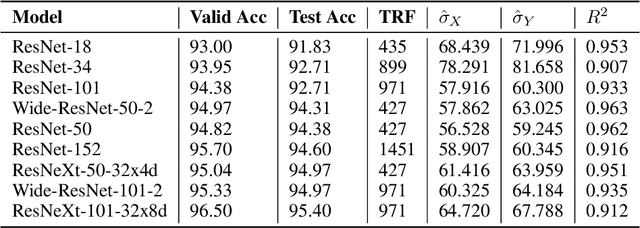

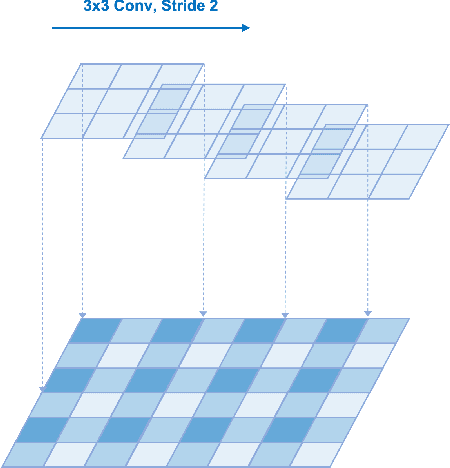

Deep neural networks are used in many fields, but their internal behavior is not well understood. In this study, we discuss two counterintuitive behaviors of convolutional neural networks (CNNs). First, we evaluate the size of the receptive field. Previous studies have attempted to increase or control the size of the receptive field. However, we observe that the size of the receptive field does not explain classification accuracy. It is an inappropriate indicator of superior performance because it reflects only depth and kernel size, and does not reflect other factors such as width or cardinality. Second, using the effective receptive field, we examine which pixels contribute to the output. Intuitively, each pixel is expected to contribute equally to the final output. However, we find that some pixels are in a partially dead state and contribute little to the output. We show that the cause lies in the architecture of the CNN and discuss ways to reduce the phenomenon. Interestingly, for general classification tasks, the existence of dead pixels improves the training of CNNs. However, in tasks that must capture small perturbations, dead pixels degrade performance. Therefore, the existence of these dead pixels should be understood and considered in practical applications of CNNs.
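
The two observations in the abstract can be illustrated with a small, self-contained sketch. It is not the paper's code: the function names `theoretical_receptive_field` and `contribution_counts` are hypothetical, the setting is reduced to 1D convolutions without padding, and all weights are assumed to be 1 so that "contribution" becomes a simple count of paths from an input pixel to the outputs. The first function shows that the theoretical receptive field depends only on kernel sizes and strides (and therefore on depth), with no term for width or cardinality. The second shows one architectural way uneven pixel contribution can arise, namely strided convolution; the abstract does not state which mechanism the paper identifies, so this is an assumption for illustration only.

```python
def theoretical_receptive_field(layers):
    """Receptive field size of a stack of 1D conv layers.

    `layers` is a list of (kernel_size, stride) pairs. Channel width and
    cardinality never appear in the recurrence, so they cannot change it.
    """
    field = 1  # a single output unit sees one unit of itself
    jump = 1   # spacing, in input pixels, between adjacent units
    for kernel_size, stride in layers:
        field += (kernel_size - 1) * jump
        jump *= stride
    return field


def contribution_counts(input_len, layers):
    """Count paths from every input pixel to the outputs (all weights = 1).

    This equals the gradient of the summed output with respect to the input
    for unpadded 1D convolutions whose weights are all ones.
    """
    # Forward pass over shapes only: record the length at every layer.
    lengths = [input_len]
    for kernel_size, stride in layers:
        out_len = (lengths[-1] - kernel_size) // stride + 1
        if out_len < 1:
            raise ValueError("input too short for this stack of layers")
        lengths.append(out_len)

    # Backward pass: start with gradient 1 at every output position.
    grad = [1] * lengths[-1]
    for (kernel_size, stride), in_len in zip(reversed(layers),
                                             reversed(lengths[:-1])):
        grad_in = [0] * in_len
        for i, g in enumerate(grad):
            for t in range(kernel_size):
                grad_in[i * stride + t] += g
        grad = grad_in
    return grad


if __name__ == "__main__":
    # Four layers of kernel size 3, one of them with stride 2.
    print(theoretical_receptive_field([(3, 1), (3, 1), (3, 2), (3, 1)]))
    # Kernel 3, stride 2: interior pixels alternate between 1 and 2 paths.
    print(contribution_counts(9, [(3, 2)]))
    # Kernel 1, stride 2: every other pixel has no path to the output.
    print(contribution_counts(9, [(1, 2)]))
```

In this toy setting, a kernel-1, stride-2 layer leaves every other input pixel with zero paths to the output, while a kernel-3, stride-2 layer leaves alternating pixels with half the contribution of their neighbors. Measuring the effective receptive field of a trained network would instead use actual gradients of the output with respect to the input.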
