Data-Driven Pixel Control: Challenges and Prospects

Paper and Code

Aug 08, 2024

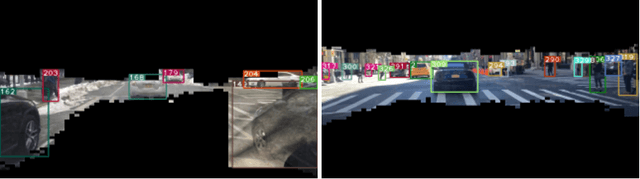

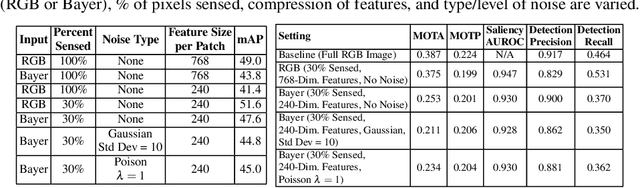

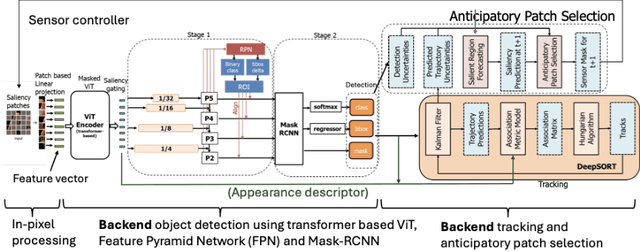

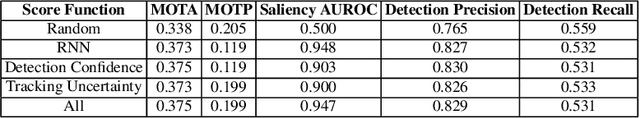

Recent advancements in sensors have led to high resolution and high data throughput at the pixel level. Simultaneously, the adoption of increasingly large (deep) neural networks (NNs) has led to significant progress in computer vision. Currently, however, visual intelligence comes at the cost of increasingly high computational complexity, energy consumption, and latency. We study a data-driven system that combines dynamic sensing at the pixel level with computer vision analytics at the video level, and we propose a feedback control loop that minimizes data movement between the sensor front-end and the computational back-end without compromising detection and tracking precision. Our contributions are threefold: (1) we introduce anticipatory attention and show that it leads to high-precision prediction with sparse activation of pixels; (2) leveraging the feedback control, we show that the dimensionality of learned feature vectors can be significantly reduced with increased sparsity; and (3) we emulate analog design choices (such as varying RGB or Bayer pixel format and analog noise) and study their impact on the key metrics of the data-driven system. Comparative analysis with traditional pixel and deep learning models shows significant performance enhancements. Our system achieves a 10X reduction in bandwidth and a 15-30X improvement in Energy-Delay Product (EDP) when activating only 30% of pixels, with a minor reduction in object detection and tracking precision. Based on analog emulation, our system can achieve a throughput of 205 megapixels/sec (MP/s) with a power consumption of only 110 mW per MP, i.e., a theoretical improvement of ~30X in EDP.
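To make the two headline metrics concrete, the following is a minimal sketch, not the authors' implementation. It assumes the standard definition of EDP as energy per task multiplied by the time to complete the task, and it models sparse activation as a simple binary pixel mask. The function names, the 10x10 frame, and the energy and delay values are all hypothetical and chosen only for illustration; they are not measurements from the paper.

```python
def edp(energy_joules: float, delay_seconds: float) -> float:
    """Energy-Delay Product: energy per task times time per task (lower is better)."""
    return energy_joules * delay_seconds


def edp_improvement(baseline: tuple, candidate: tuple) -> float:
    """Ratio of baseline EDP to candidate EDP; a value above 1 favors the candidate."""
    return edp(*baseline) / edp(*candidate)


def window_mask(height: int, width: int, top: int, left: int, h: int, w: int) -> list:
    """Binary mask that activates only the pixels inside one rectangular window."""
    return [
        [top <= r < top + h and left <= c < left + w for c in range(width)]
        for r in range(height)
    ]


def active_fraction(mask: list) -> float:
    """Fraction of pixels that are switched on in the mask."""
    total = sum(len(row) for row in mask)
    active = sum(1 for row in mask for value in row if value)
    return active / total


# Hypothetical 10x10 frame with a 5x6 attention window: 30 of 100 pixels active.
mask = window_mask(10, 10, top=2, left=3, h=5, w=6)
fraction = active_fraction(mask)

# Hypothetical (energy, delay) pairs in arbitrary units, NOT values from the paper:
# the candidate uses 4x less energy and finishes 5x sooner than the baseline.
baseline = (1.0, 1.0)
candidate = (0.25, 0.2)
ratio = edp_improvement(baseline, candidate)

print(f"active pixel fraction: {fraction:.2f}")
print(f"EDP improvement: {ratio:.1f}x")
```

Because EDP is a product, savings in energy and in delay multiply: under this definition, a 4x energy reduction combined with a 5x latency reduction gives a 20x EDP improvement. How the reported 15-30X figure splits between energy and delay is not stated in the abstract.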
