Cooperative Learning of Zero-Shot Machine Reading Comprehension

Mar 22, 2021

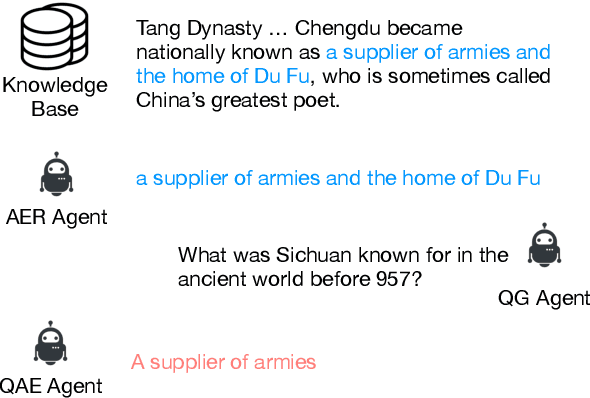

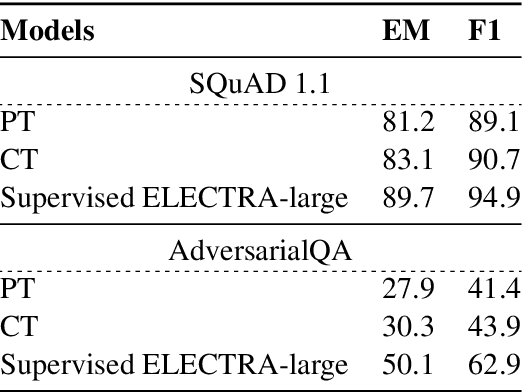

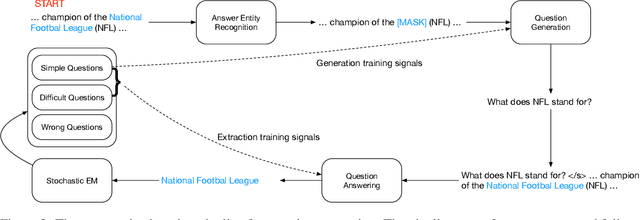

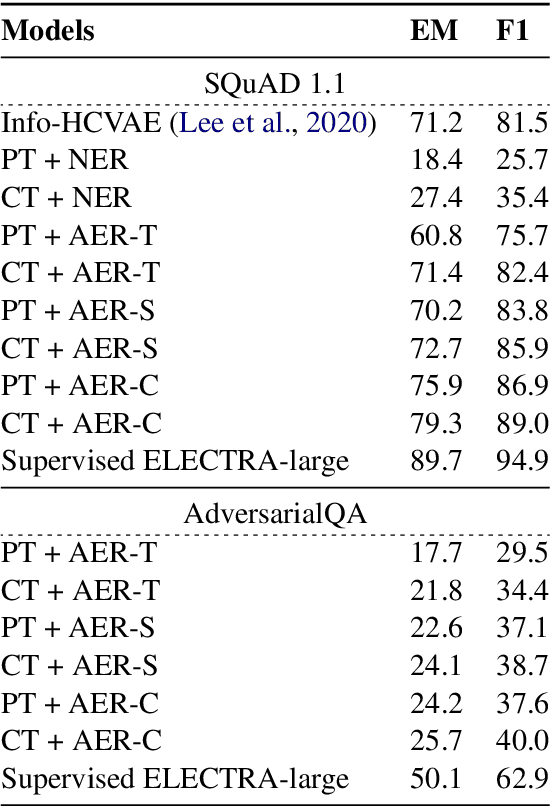

Pretrained language models have significantly improved the performance of downstream language understanding tasks, including extractive question answering, by providing high-quality contextualized word embeddings. However, training question answering models still requires large-scale data annotation in specific domains. In this work, we propose a cooperative, self-play learning framework, REGEX, for question generation and answering. REGEX is built upon a masked answer extraction task with an interactive learning environment containing an answer entity REcognizer, a question Generator, and an answer EXtractor. Given a passage with a masked entity, the generator generates a question around the entity, and the extractor is trained to extract the masked entity from the generated question and the raw text. The framework allows question generation and answering models to be trained on any text corpus without annotation. We further leverage a reinforcement learning technique to reward the generation of high-quality questions and to improve the answer extraction model's performance. Experimental results show that REGEX outperforms state-of-the-art (SOTA) pretrained language models and zero-shot approaches on standard question-answering benchmarks, and yields new SOTA performance under the zero-shot setting.
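To make the data flow of the masked answer extraction task concrete, here is a minimal, illustrative sketch of one self-play round. It is not the authors' implementation: the three components are toy rule-based stand-ins for the neural recognizer, generator, and extractor, and every function name, the `[MASK]` token, and the exact-match reward are assumptions introduced only for this example.

```python
import re

MASK = "[MASK]"  # assumed mask token; the abstract does not specify one


def recognize_entities(passage):
    """Toy recognizer (assumption): capitalized words other than the first word."""
    tokens = re.findall(r"[A-Za-z]+", passage)
    return [t for i, t in enumerate(tokens) if i > 0 and t[0].isupper()]


def mask_entity(passage, entity):
    """Replace the first occurrence of the entity with the mask token."""
    return passage.replace(entity, MASK, 1)


def generate_question(masked_passage):
    """Toy generator (assumption): turn the masked sentence into a cloze-style question."""
    return masked_passage.rstrip(".").replace(MASK, "what") + "?"


def extract_answer(question, passage):
    """Toy extractor (assumption): pick the first passage entity absent from the question."""
    for candidate in recognize_entities(passage):
        if candidate not in question:
            return candidate
    return ""


def self_play_round(passage):
    """Run recognizer -> generator -> extractor once per entity and score each attempt."""
    results = []
    for answer in recognize_entities(passage):
        question = generate_question(mask_entity(passage, answer))
        predicted = extract_answer(question, passage)
        # Hypothetical reward: 1.0 when the extractor recovers the masked entity.
        reward = 1.0 if predicted == answer else 0.0
        results.append((question, answer, predicted, reward))
    return results


if __name__ == "__main__":
    for row in self_play_round("The capital of France is Paris."):
        print(row)
```

In the framework described above, the generator and extractor would be learned models and a reward of this kind would feed a reinforcement learning update. The sketch only shows how an unannotated passage can yield question, answer, and reward triples.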
