Context-aware Proposal Network for Temporal Action Detection

Paper and Code

Jun 18, 2022

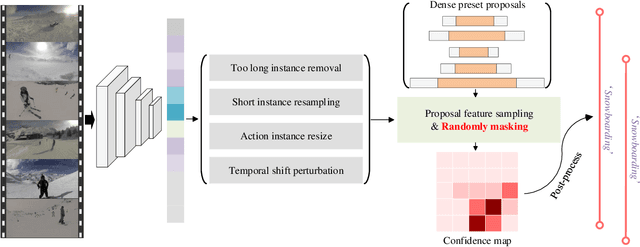

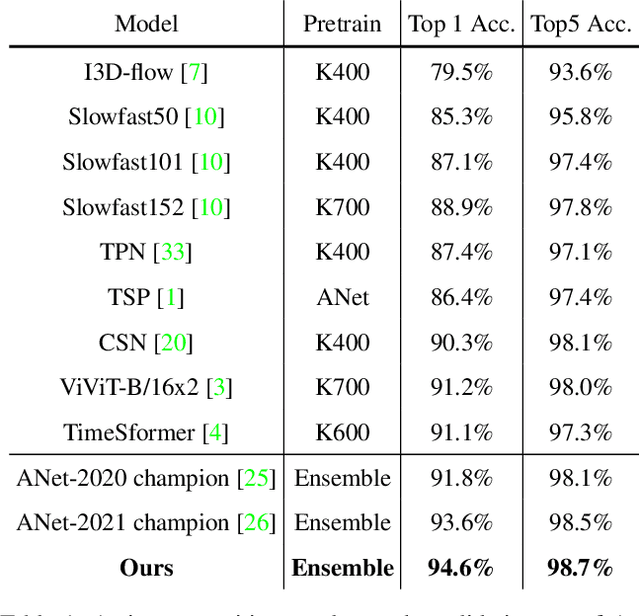

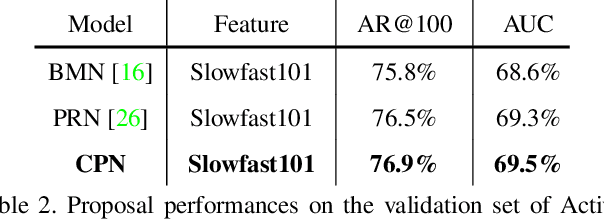

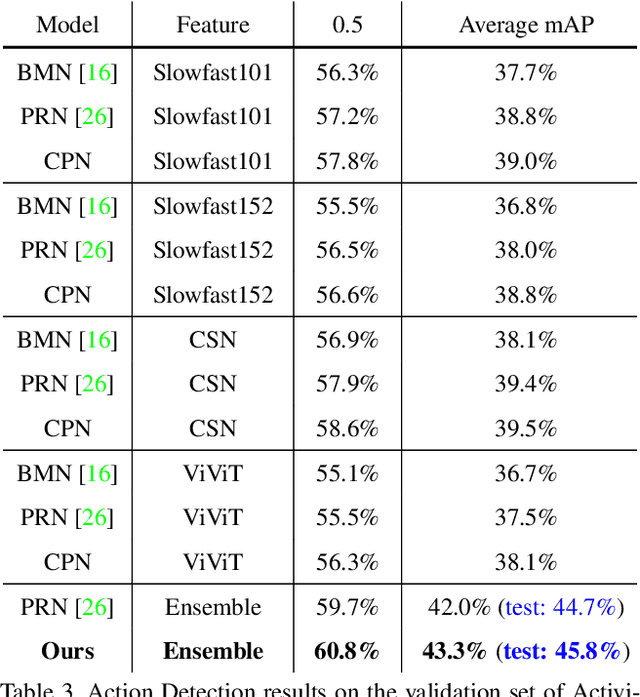

This technical report presents our first place winning solution for temporal action detection task in CVPR-2022 AcitivityNet Challenge. The task aims to localize temporal boundaries of action instances with specific classes in long untrimmed videos. Recent mainstream attempts are based on dense boundary matchings and enumerate all possible combinations to produce proposals. We argue that the generated proposals contain rich contextual information, which may benefits detection confidence prediction. To this end, our method mainly consists of the following three steps: 1) action classification and feature extraction by Slowfast, CSN, TimeSformer, TSP, I3D-flow, VGGish-audio, TPN and ViViT; 2) proposal generation. Our proposed Context-aware Proposal Network (CPN) builds on top of BMN, GTAD and PRN to aggregate contextual information by randomly masking some proposal features. 3) action detection. The final detection prediction is calculated by assigning the proposals with corresponding video-level classifcation results. Finally, we ensemble the results under different feature combination settings and achieve 45.8% performance on the test set, which improves the champion result in CVPR-2021 ActivityNet Challenge by 1.1% in terms of average mAP.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge