Conservative Policy Construction Using Variational Autoencoders for Logged Data with Missing Values

Paper and Code

Sep 08, 2021

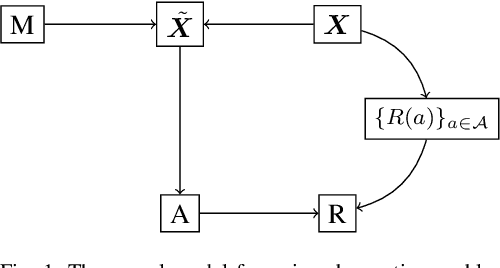

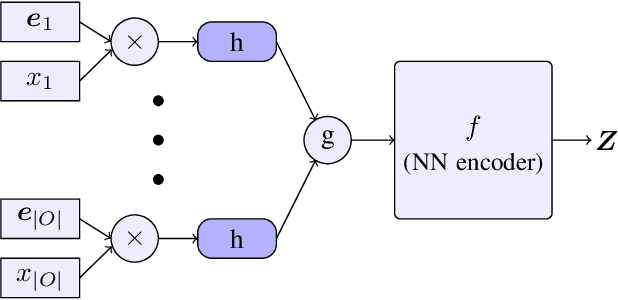

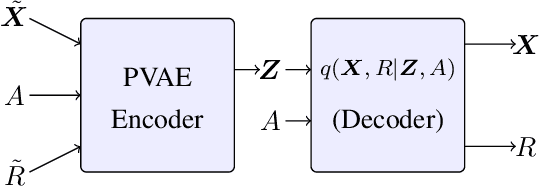

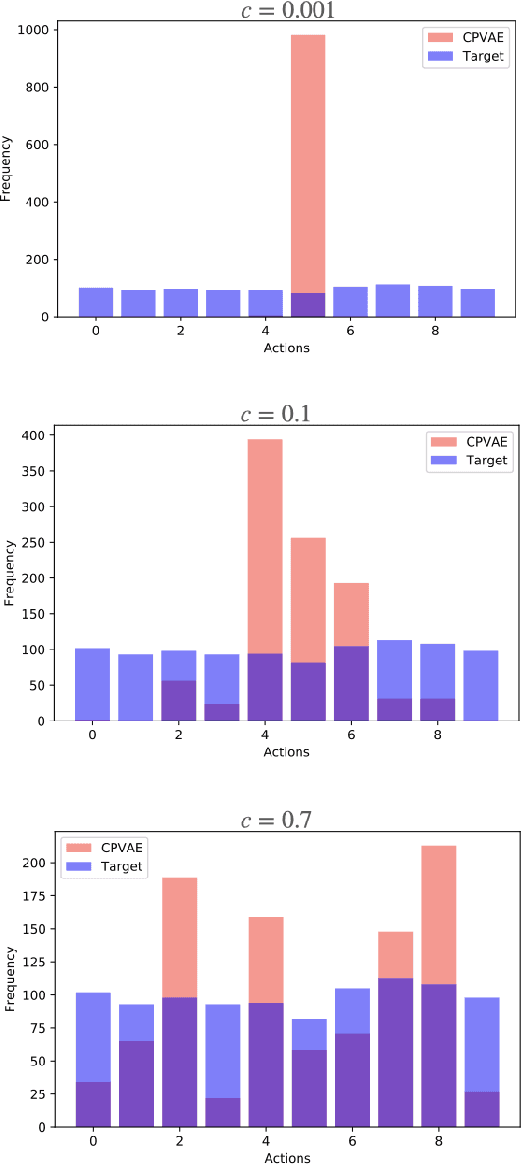

In high-stakes applications of data-driven decision making like healthcare, it is of paramount importance to learn a policy that maximizes the reward while avoiding potentially dangerous actions when there is uncertainty. There are two main challenges usually associated with this problem. Firstly, learning through online exploration is not possible due to the critical nature of such applications. Therefore, we need to resort to observational datasets with no counterfactuals. Secondly, such datasets are usually imperfect, additionally cursed with missing values in the attributes of features. In this paper, we consider the problem of constructing personalized policies using logged data when there are missing values in the attributes of features in both training and test data. The goal is to recommend an action (treatment) when $\Xt$, a degraded version of $\Xb$ with missing values, is observed. We consider three strategies for dealing with missingness. In particular, we introduce the \textit{conservative strategy} where the policy is designed to safely handle the uncertainty due to missingness. In order to implement this strategy we need to estimate posterior distribution $p(\Xb|\Xt)$, we use variational autoencoder to achieve this. In particular, our method is based on partial variational autoencoders (PVAE) which are designed to capture the underlying structure of features with missing values.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge