Conformal Isometry of Lie Group Representation in Recurrent Network of Grid Cells

Paper and Code

Oct 06, 2022

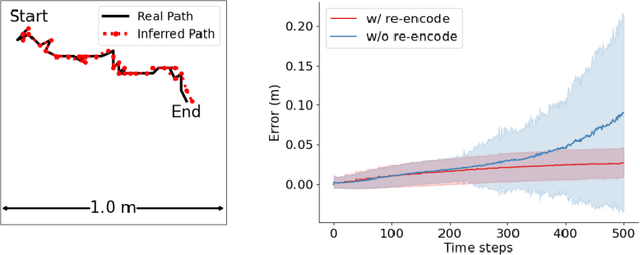

The activity of the grid cell population in the medial entorhinal cortex (MEC) of the brain forms a vector representation of the self-position of the animal. Recurrent neural networks have been developed to explain the properties of the grid cells by transforming the vector based on the input velocity, so that the grid cells can perform path integration. In this paper, we investigate the algebraic, geometric, and topological properties of grid cells using recurrent network models. Algebraically, we study the Lie group and Lie algebra of the recurrent transformation as a representation of self-motion. Geometrically, we study the conformal isometry of the Lie group representation of the recurrent network where the local displacement of the vector in the neural space is proportional to the local displacement of the agent in the 2D physical space. We then focus on a simple non-linear recurrent model that underlies the continuous attractor neural networks of grid cells. Our numerical experiments show that conformal isometry leads to hexagon periodic patterns of the response maps of grid cells and our model is capable of accurate path integration.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge