Class-Distribution-Aware Pseudo Labeling for Semi-Supervised Multi-Label Learning

Paper and Code

May 04, 2023

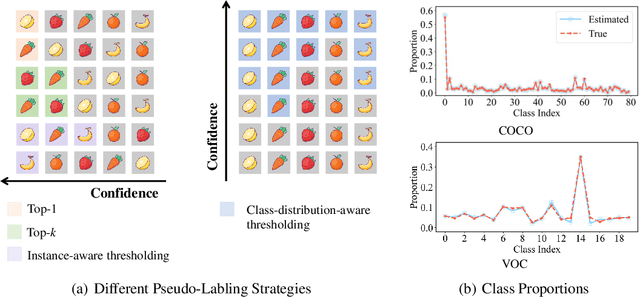

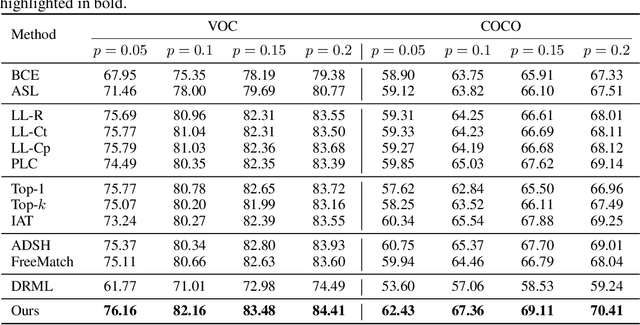

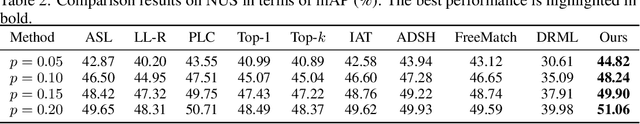

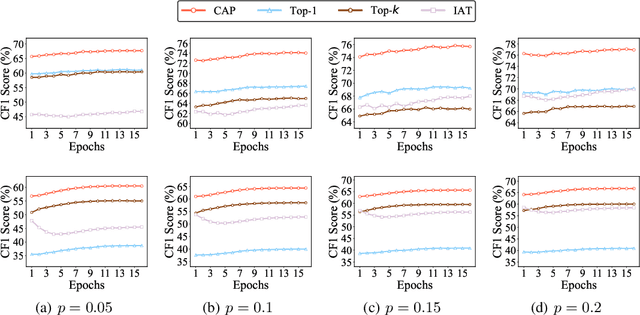

Pseudo labeling is a popular and effective method to leverage the information of unlabeled data. Conventional instance-aware pseudo labeling methods often assign each unlabeled instance with a pseudo label based on its predicted probabilities. However, due to the unknown number of true labels, these methods cannot generalize well to semi-supervised multi-label learning (SSMLL) scenarios, since they would suffer from the risk of either introducing false positive labels or neglecting true positive ones. In this paper, we propose to solve the SSMLL problems by performing Class-distribution-Aware Pseudo labeling (CAP), which encourages the class distribution of pseudo labels to approximate the true one. Specifically, we design a regularized learning framework consisting of the class-aware thresholds to control the number of pseudo labels for each class. Given that the labeled and unlabeled examples are sampled according to the same distribution, we determine the thresholds by exploiting the empirical class distribution, which can be treated as a tight approximation to the true one. Theoretically, we show that the generalization performance of the proposed method is dependent on the pseudo labeling error, which can be significantly reduced by the CAP strategy. Extensive experimental results on multiple benchmark datasets validate that CAP can effectively solve the SSMLL problems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge