CircConv: A Structured Convolution with Low Complexity

Paper and Code

Feb 28, 2019

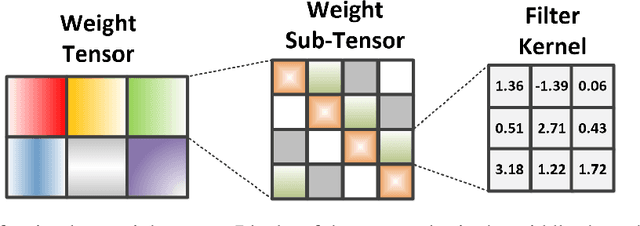

Deep neural networks (DNNs), especially deep convolutional neural networks (CNNs), have emerged as the powerful technique in various machine learning applications. However, the large model sizes of DNNs yield high demands on computation resource and weight storage, thereby limiting the practical deployment of DNNs. To overcome these limitations, this paper proposes to impose the circulant structure to the construction of convolutional layers, and hence leads to circulant convolutional layers (CircConvs) and circulant CNNs. The circulant structure and models can be either trained from scratch or re-trained from a pre-trained non-circulant model, thereby making it very flexible for different training environments. Through extensive experiments, such strong structure-imposing approach is proved to be able to substantially reduce the number of parameters of convolutional layers and enable significant saving of computational cost by using fast multiplication of the circulant tensor.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge