Chipmunk: A Systolically Scalable 0.9 mm${}^2$, 3.08 Gop/s/mW @ 1.2 mW Accelerator for Near-Sensor Recurrent Neural Network Inference


Feb 20, 2018

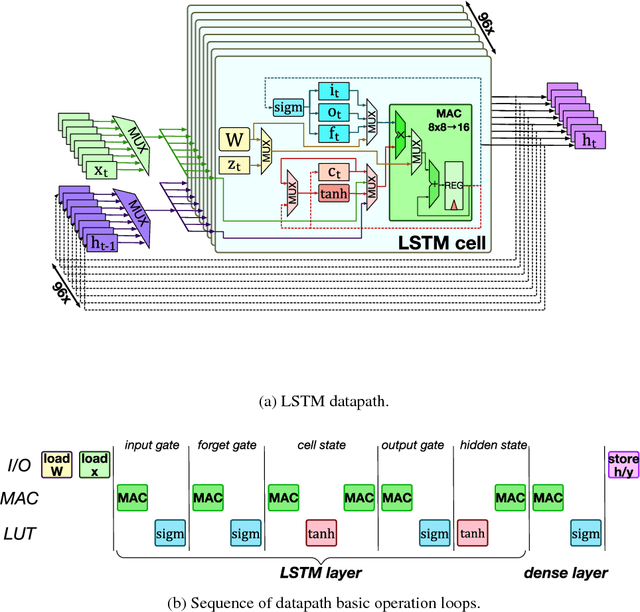

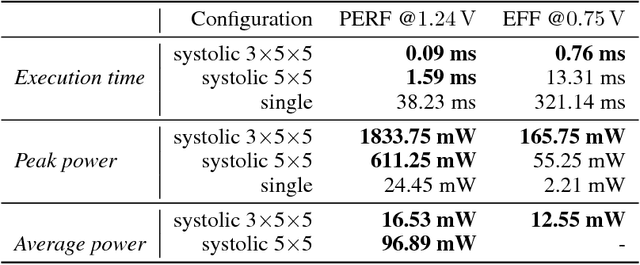

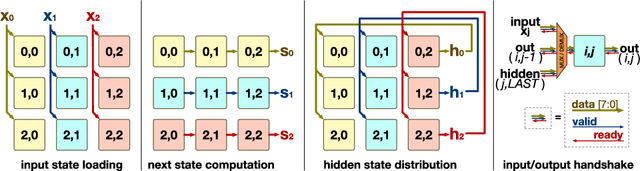

Recurrent neural networks (RNNs) are state-of-the-art in voice awareness/understanding and speech recognition. On-device computation of RNNs on low-power mobile and wearable devices would be key to applications such as zero-latency voice-based human-machine interfaces. Here we present Chipmunk, a small (<1 mm${}^2$) hardware accelerator for Long Short-Term Memory RNNs in UMC 65 nm technology, capable of operating at a measured peak efficiency of up to 3.08 Gop/s/mW at 1.24 mW peak power. To implement large RNN models without incurring a large memory transfer overhead, multiple Chipmunk engines can cooperate to form a single systolic array. In this way, the Chipmunk architecture in a 75-tile configuration can achieve real-time phoneme extraction on a demanding RNN topology proposed by Graves et al., consuming less than 13 mW of average power.
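
The headline figures above can be related with some back-of-the-envelope arithmetic. The sketch below is illustrative only: it assumes that the quoted peak efficiency and the quoted 1.24 mW power refer to the same operating point, and that the 13 mW bound covers the whole 75-tile array. The function and constant names are hypothetical and do not come from the paper.

```python
# Figures quoted in the abstract.
PEAK_EFFICIENCY_GOPS_PER_MW = 3.08   # Gop/s/mW, measured peak efficiency
PEAK_POWER_MW = 1.24                 # mW, power quoted alongside the peak efficiency
NUM_TILES = 75                       # tiles in the systolic configuration
ARRAY_AVG_POWER_BOUND_MW = 13.0      # mW, upper bound on average power


def implied_throughput_gops(efficiency_gops_per_mw: float, power_mw: float) -> float:
    """Throughput implied by an efficiency figure at a given power (Gop/s)."""
    return efficiency_gops_per_mw * power_mw


def per_tile_power_bound_mw(total_power_mw: float, num_tiles: int) -> float:
    """Upper bound on average power per tile, assuming the total covers all tiles."""
    return total_power_mw / num_tiles


# Assumption: efficiency and power describe the same operating point.
throughput = implied_throughput_gops(PEAK_EFFICIENCY_GOPS_PER_MW, PEAK_POWER_MW)

# Assumption: the 13 mW figure is the total for the 75-tile array.
per_tile = per_tile_power_bound_mw(ARRAY_AVG_POWER_BOUND_MW, NUM_TILES)

print(f"Implied single-engine throughput: {throughput:.2f} Gop/s")
print(f"Average power per tile: < {per_tile:.3f} mW")
```

Under these assumptions a single engine delivers a few Gop/s at its most efficient operating point, and each tile in the 75-tile array averages well below that single-engine power figure.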
