Causality is all you need

Paper and Code

Nov 21, 2023

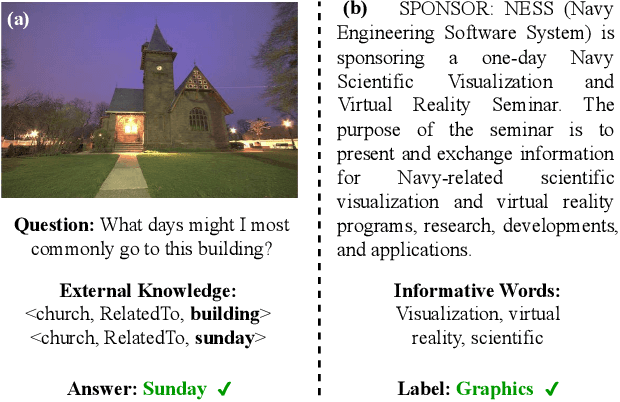

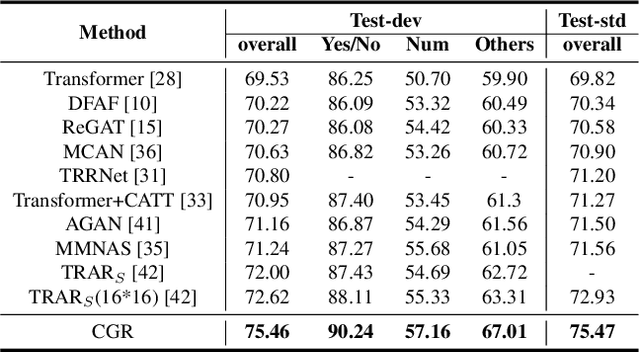

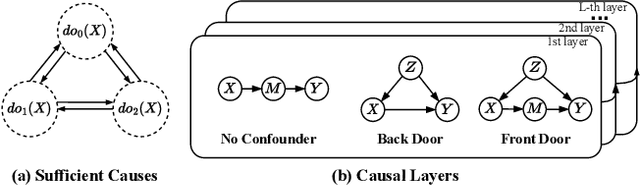

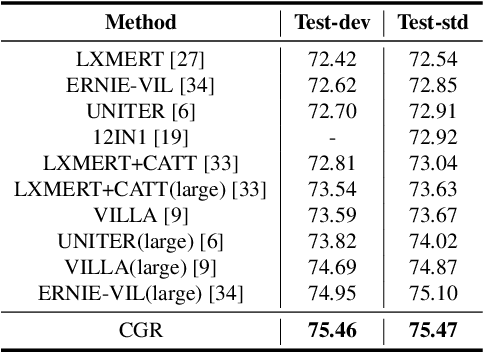

In the fundamental statistics course, students are taught to remember the well-known saying: "Correlation is not Causation". Till now, statistics (i.e., correlation) have developed various successful frameworks, such as Transformer and Pre-training large-scale models, which have stacked multiple parallel self-attention blocks to imitate a wide range of tasks. However, in the causation community, how to build an integrated causal framework still remains an untouched domain despite its excellent intervention capabilities. In this paper, we propose the Causal Graph Routing (CGR) framework, an integrated causal scheme relying entirely on the intervention mechanisms to reveal the cause-effect forces hidden in data. Specifically, CGR is composed of a stack of causal layers. Each layer includes a set of parallel deconfounding blocks from different causal graphs. We combine these blocks via the concept of the proposed sufficient cause, which allows the model to dynamically select the suitable deconfounding methods in each layer. CGR is implemented as the stacked networks, integrating no confounder, back-door adjustment, front-door adjustment, and probability of sufficient cause. We evaluate this framework on two classical tasks of CV and NLP. Experiments show CGR can surpass the current state-of-the-art methods on both Visual Question Answer and Long Document Classification tasks. In particular, CGR has great potential in building the "causal" pre-training large-scale model that effectively generalizes to diverse tasks. It will improve the machines' comprehension of causal relationships within a broader semantic space.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge