Buildings Detection in VHR SAR Images Using Fully Convolution Neural Networks


Aug 14, 2018

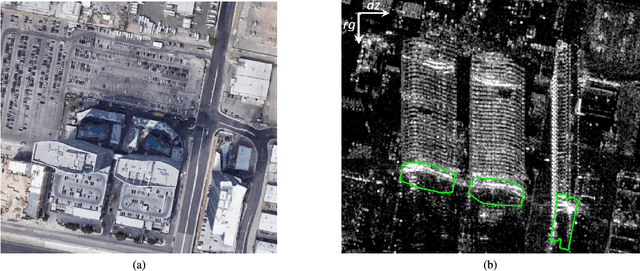

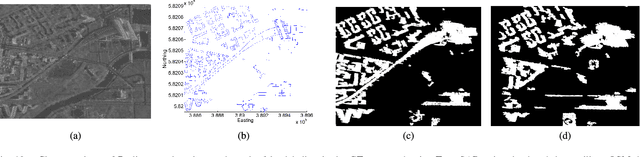

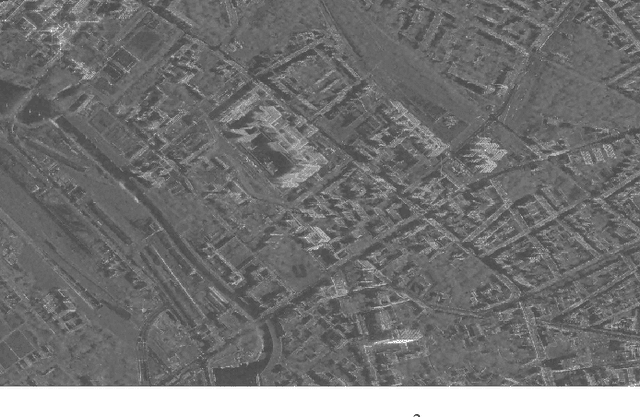

This paper addresses the highly challenging problem of automatically detecting man-made structures, especially buildings, in very high resolution (VHR) synthetic aperture radar (SAR) images. It makes two major contributions. First, it presents a novel and generic workflow that classifies spaceborne TomoSAR point clouds (generated by processing VHR SAR image stacks with an advanced interferometric technique known as SAR tomography, or TomoSAR) into buildings and non-buildings with the aid of auxiliary information (either openly available 2-D building footprints or an optical image classification scheme), and then back-projects the extracted building points onto the SAR imaging coordinates to automatically produce large-scale benchmark labelled (buildings/non-buildings) SAR datasets. Second, these labelled datasets (i.e., building masks) are used to construct and train state-of-the-art deep Fully Convolution Neural Networks with an additional Conditional Random Field represented as a Recurrent Neural Network to detect building regions in a single VHR SAR image. Such a cascaded formation has been successfully employed in the computer vision and remote sensing fields for optical image classification but, to our knowledge, has not been applied to SAR images. The building detection results are illustrated and validated over a TerraSAR-X VHR spotlight SAR image covering approximately 39 km$^2$, almost the whole city of Berlin, with mean pixel accuracies of around 93.84%.
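The abstract reports a mean pixel accuracy but does not define it. The sketch below assumes the definition commonly used in the semantic segmentation literature, the average of the per-class pixel accuracies, applied to a binary buildings/non-buildings mask. The function name `mean_pixel_accuracy` and the toy masks are illustrative only and are not taken from the paper.

```python
from typing import List, Sequence


def mean_pixel_accuracy(pred: Sequence[Sequence[int]],
                        truth: Sequence[Sequence[int]],
                        num_classes: int = 2) -> float:
    """Average of per-class pixel accuracies (assumed definition).

    For each class c, accuracy is (pixels of class c predicted as c)
    divided by (all pixels whose true label is c). Classes that do not
    occur in the ground truth are skipped.
    """
    correct: List[int] = [0] * num_classes
    total: List[int] = [0] * num_classes
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            total[t] += 1
            if p == t:
                correct[t] += 1
    per_class = [c / n for c, n in zip(correct, total) if n > 0]
    if not per_class:
        raise ValueError("ground truth contains no labelled pixels")
    return sum(per_class) / len(per_class)


# Toy masks: 0 = non-building, 1 = building (hypothetical data).
truth = [[0, 0, 0, 0],
         [0, 0, 1, 1]]
pred = [[0, 0, 0, 0],
        [0, 0, 1, 0]]

score = mean_pixel_accuracy(pred, truth)
print(f"mean pixel accuracy: {score:.2f}")
```

In this toy case every non-building pixel is correct and one of the two building pixels is missed, so the per-class accuracies are 1.0 and 0.5. Averaging per class keeps the large non-building area from masking errors on the smaller building class, which plain overall accuracy (7 of 8 pixels here) would do.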
