Bayesian Layers: A Module for Neural Network Uncertainty

Paper and Code

Dec 11, 2018

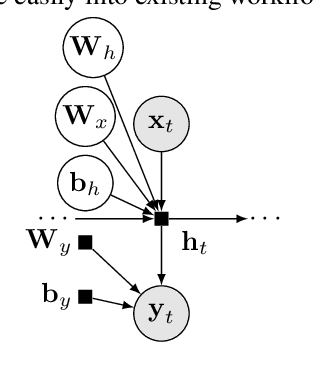

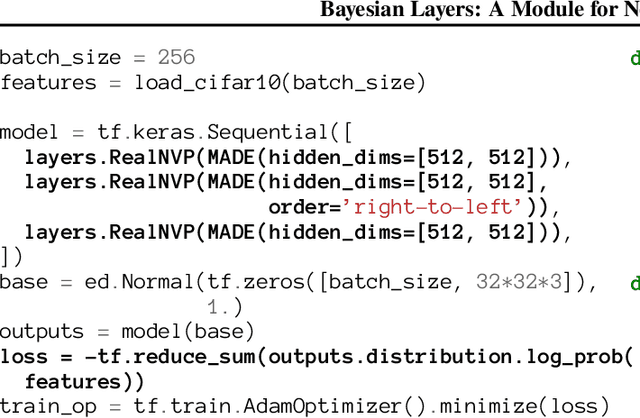

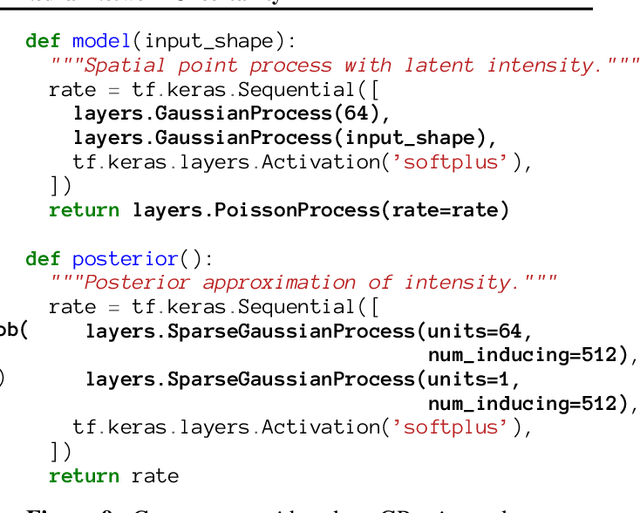

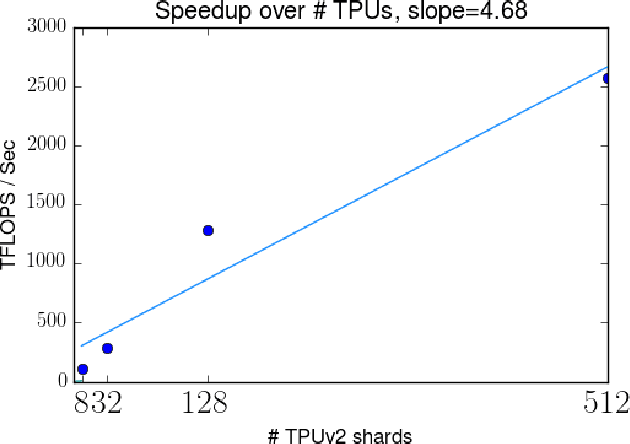

We describe Bayesian Layers, a module designed for fast experimentation with neural network uncertainty. It extends neural network libraries with layers capturing uncertainty over weights (Bayesian neural nets), pre-activation units (dropout), activations ("stochastic output layers"), and the function itself (Gaussian processes). With reversible layers, one can also propagate uncertainty from input to output such as for flow-based distributions and constant-memory backpropagation. Bayesian Layers are a drop-in replacement for other layers, maintaining core features that one typically desires for experimentation. As demonstration, we fit a 10-billion parameter "Bayesian Transformer" on 512 TPUv2 cores, which replaces attention layers with their Bayesian counterpart.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge