Back to Prior Knowledge: Joint Event Causality Extraction via Convolutional Semantic Infusion

Feb 19, 2021

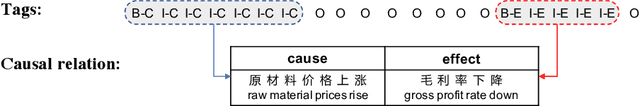

Joint event and causality extraction is a challenging yet essential task in information retrieval and data mining. Recently, pre-trained language models (e.g., BERT) have yielded state-of-the-art results and come to dominate a variety of NLP tasks. However, these models cannot readily incorporate external knowledge for domain-specific extraction. Since prior knowledge of frequent n-grams that represent cause/effect events may benefit both event and causality extraction, in this paper we propose convolutional knowledge infusion for frequent n-grams with windows of different lengths within a joint extraction framework. Knowledge infusion during convolutional filter initialization not only helps the model capture both intra-event (i.e., features within an event cluster) and inter-event (i.e., associations across event clusters) features but also speeds up training convergence. Experimental results on the benchmark datasets show that our model significantly outperforms the strong BERT+CSNN baseline.
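
To make the idea of infusing n-gram knowledge at filter initialization concrete, here is a minimal NumPy sketch. It is not the authors' implementation: the abstract does not say how frequent n-grams are turned into filters, so this assumes the simplest option, in which each filter is the stacked, normalized embeddings of one frequent n-gram. The names `build_infused_filters` and `convolve`, the random embedding table, and the token ids are all hypothetical.

```python
import numpy as np

def build_infused_filters(ngram_token_ids, embedding_table):
    """Stack the embeddings of each frequent n-gram into one filter of shape (n, dim).

    Assumption: all n-grams passed in share the same length n.
    """
    filters = np.stack([embedding_table[list(ids)] for ids in ngram_token_ids])
    # L2-normalize each filter so responses are comparable across n-grams.
    norms = np.linalg.norm(filters.reshape(len(filters), -1), axis=1)
    return filters / norms[:, None, None]

def convolve(sentence_ids, embedding_table, filters):
    """Slide every filter over the sentence; returns an array (positions, num_filters)."""
    n = filters.shape[1]
    x = embedding_table[list(sentence_ids)]                          # (seq_len, dim)
    windows = np.stack([x[i:i + n] for i in range(len(x) - n + 1)])  # (positions, n, dim)
    return np.einsum("pnd,fnd->pf", windows, filters)

# Toy setup: random unit-norm embeddings stand in for a pre-trained table.
rng = np.random.default_rng(0)
embedding_table = rng.normal(size=(20, 8))
embedding_table /= np.linalg.norm(embedding_table, axis=1, keepdims=True)

# Hypothetical frequent bigrams (as token ids) mined from cause/effect events.
frequent_bigrams = [(3, 7), (11, 2)]
filters = build_infused_filters(frequent_bigrams, embedding_table)

# Each filter responds most strongly where its n-gram occurs in the sentence.
sentence = [5, 3, 7, 9, 11, 2, 4]
responses = convolve(sentence, embedding_table, filters)
best_positions = responses.argmax(axis=0)
```

In a trainable model these arrays would only be the starting values of the convolutional weights, with one filter bank per window length, and would then be updated by gradient descent together with the rest of the network.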
