Autonomous Visual Rendering using Physical Motion

Paper and Code

Sep 08, 2017

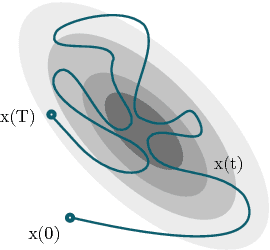

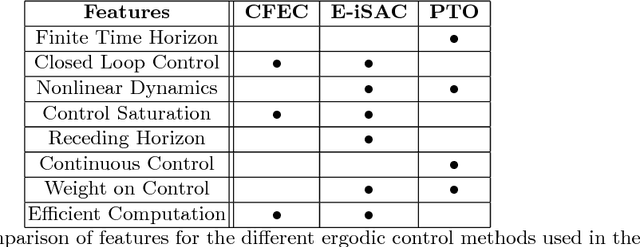

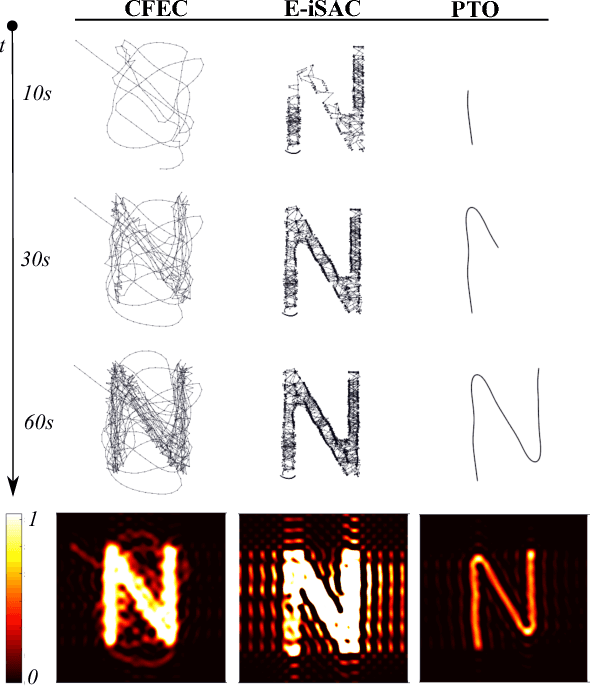

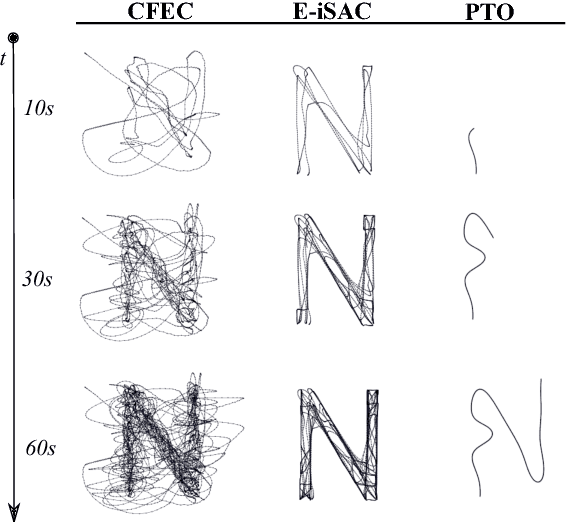

This paper addresses the problem of enabling a robot to represent and recreate visual information through physical motion, focusing on drawing using pens, brushes, or other tools. This work uses ergodicity as a control objective that translates planar visual input to physical motion without preprocessing (e.g., image processing, motion primitives). % or human-generated training data (i.e., machine learning). We achieve comparable results to existing drawing methods, while reducing the algorithmic complexity of the software. We demonstrate that optimal ergodic control algorithms with different time-horizon characteristics (infinitesimal, finite, and receding horizon) can generate qualitatively and stylistically different motions that render a wide range of visual information (e.g., letters, portraits, landscapes). In addition, we show that ergodic control enables the same software design to apply to multiple robotic systems by incorporating their particular dynamics, thereby reducing the dependence on task-specific robots. Finally, we demonstrate physical drawings with the Baxter robot.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge