Automatic Testing and Falsification with Dynamically Constrained Reinforcement Learning


Oct 30, 2019

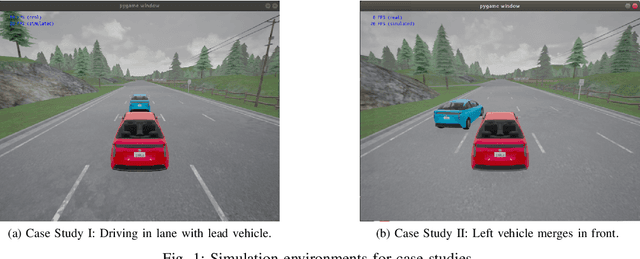

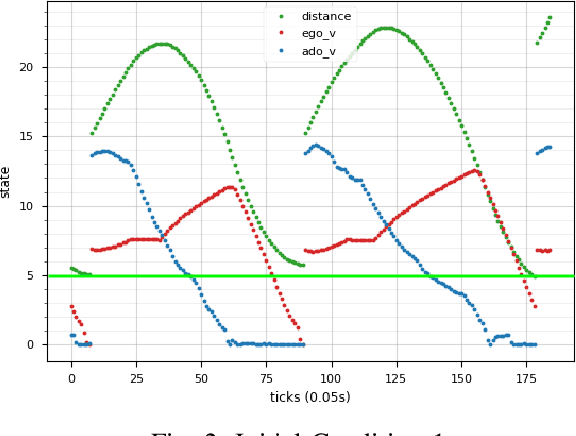

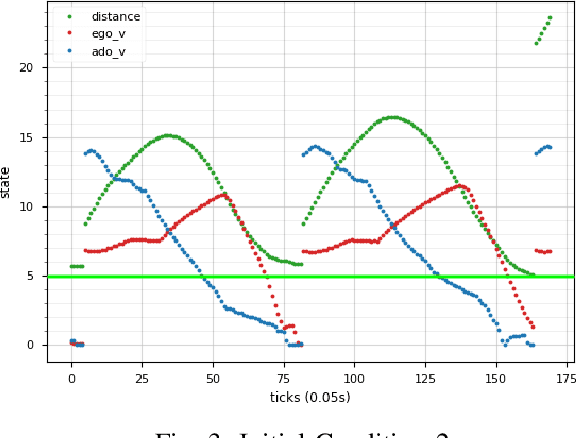

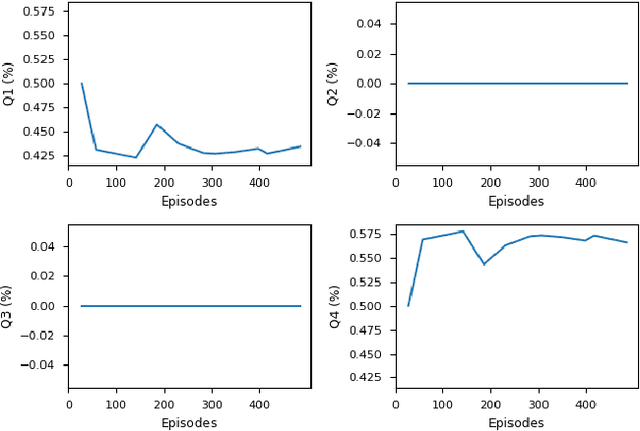

We consider the problem of using reinforcement learning to train adversarial agents for automatic testing and falsification of cyber-physical systems, such as autonomous vehicles, robots, and airplanes. To produce useful agents, however, it helps to be able to control the degree of adversariality by specifying rules that an agent must follow. For example, when testing an autonomous vehicle, it is useful to find maximally antagonistic traffic participants that still obey traffic rules. We model dynamic constraints as hierarchically ordered rules expressed in Signal Temporal Logic, and show how these can be incorporated into an agent training process. We prove that our agent-centric approach is able to find all dangerous behaviors that can be found by traditional falsification techniques while producing modular and reusable agents. We demonstrate our approach on two case studies from the automotive domain.
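As a rough illustration of what hierarchically ordered Signal Temporal Logic rules might look like in practice, the sketch below evaluates the standard quantitative (robustness) semantics of two "always" rules over a short discrete-time trace and reports the highest-priority rule that is violated. It is not the paper's implementation: the rule names, thresholds, trace values, and helper functions (`always_less`, `always_greater`, `rule_robustness`, `first_violated`) are all hypothetical and chosen only for this example.

```python
from typing import Callable, Dict, List, Optional, Sequence

# A trace maps a signal name to its sampled values over time (hypothetical format).
Trace = Dict[str, Sequence[float]]
Rule = Callable[[Trace], float]


def always_less(name: str, threshold: float) -> Rule:
    """Robustness of G(signal < threshold): the worst-case margin over time."""
    return lambda trace: min(threshold - x for x in trace[name])


def always_greater(name: str, threshold: float) -> Rule:
    """Robustness of G(signal > threshold): the worst-case margin over time."""
    return lambda trace: min(x - threshold for x in trace[name])


def rule_robustness(trace: Trace, rules: Sequence[Rule]) -> List[float]:
    """Evaluate every rule; a negative value means the rule is violated."""
    return [rule(trace) for rule in rules]


def first_violated(robustness: Sequence[float]) -> Optional[int]:
    """Index of the highest-priority violated rule, or None if all rules hold."""
    for index, value in enumerate(robustness):
        if value < 0:
            return index
    return None


# Rules listed from highest to lowest priority (assumed ordering and thresholds).
rules = [
    always_less("speed", 30.0),   # rule 0: never exceed the speed limit
    always_greater("gap", 5.0),   # rule 1: always keep a minimum gap
]

# Hypothetical trace of an adversarial traffic participant.
trace = {"speed": [20.0, 25.0, 28.0], "gap": [12.0, 8.0, 3.0]}

margins = rule_robustness(trace, rules)
violated = first_violated(margins)
```

In this example the speed rule holds with a margin of 2.0, while the gap rule is violated with a margin of -2.0, so `violated` is 1. One way such margins could feed into training is as a penalty term that is weighted by rule priority, though how the paper actually incorporates the rules into the reward is not specified in this abstract.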
