Automatic Reward Design via Learning Motivation-Consistent Intrinsic Rewards

Paper and Code

Jul 29, 2022

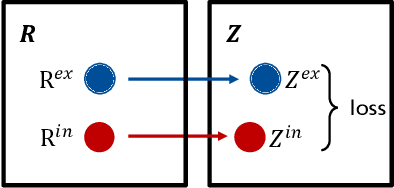

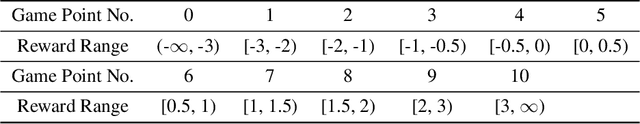

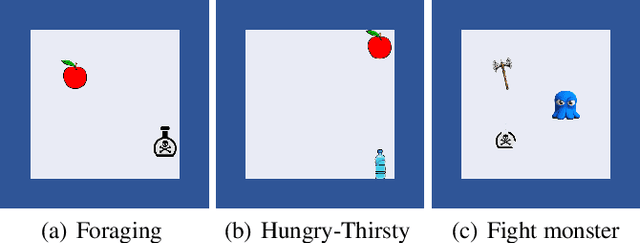

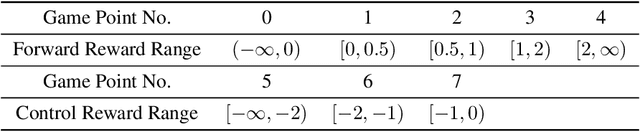

Reward design is a critical part of the application of reinforcement learning, the performance of which strongly depends on how well the reward signal frames the goal of the designer and how well the signal assesses progress in reaching that goal. In many cases, the extrinsic rewards provided by the environment (e.g., win or loss of a game) are very sparse and make it difficult to train agents directly. Researchers usually assist the learning of agents by adding some auxiliary rewards in practice. However, designing auxiliary rewards is often turned to a trial-and-error search for reward settings that produces acceptable results. In this paper, we propose to automatically generate goal-consistent intrinsic rewards for the agent to learn, by maximizing which the expected accumulative extrinsic rewards can be maximized. To this end, we introduce the concept of motivation which captures the underlying goal of maximizing certain rewards and propose the motivation based reward design method. The basic idea is to shape the intrinsic rewards by minimizing the distance between the intrinsic and extrinsic motivations. We conduct extensive experiments and show that our method performs better than the state-of-the-art methods in handling problems of delayed reward, exploration, and credit assignment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge