Automatic Encoding and Repair of Reactive High-Level Tasks with Learned Abstract Representations

Paper and Code

Apr 18, 2022

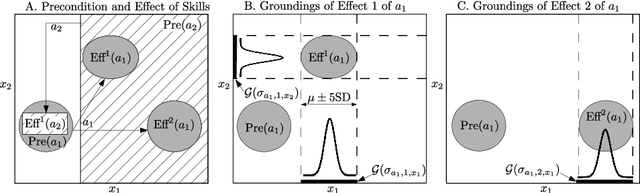

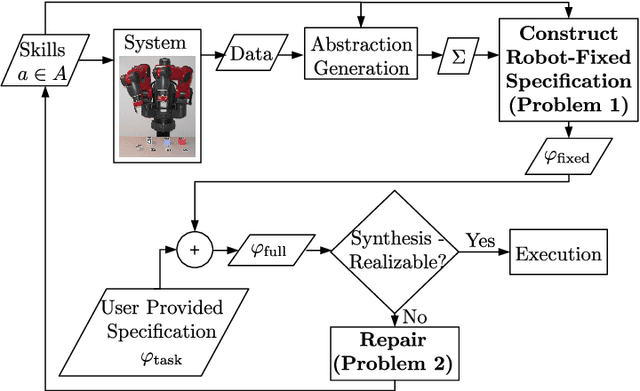

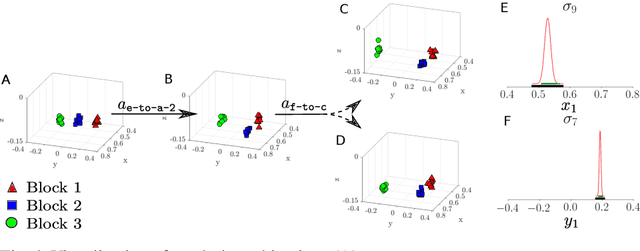

We present a framework that, given a set of skills a robot can perform, abstracts sensor data into symbols that we use to automatically encode the robot's capabilities in Linear Temporal Logic. Reactive high-level tasks are specified over these capabilities; if a task is feasible, a strategy is automatically synthesized and executed on the robot. For tasks that are not feasible given the robot's capabilities, we present two methods, one enumeration-based and one synthesis-based, that automatically suggest additional skills for the robot, or modifications to existing skills, that would make the task feasible. We demonstrate our framework on a Baxter robot manipulating blocks on a table, a Baxter robot manipulating plates on a table, and a Kinova arm manipulating vials, with multiple sensor modalities, including raw images.
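
To make the pipeline in the abstract more concrete, here is a minimal toy sketch in Python. It is not the authors' implementation. It assumes the symbols have already been learned, models each skill by hypothetical precondition and effect sets, renders a skill as an LTL-style string, and replaces reactive synthesis with a plain reachability check. The `suggest_skills` function only illustrates the general idea of an enumeration-based repair; every name in it (`Skill`, `ltl_encoding`, `feasible`, `suggest_skills`, the `block_*` symbols) is invented for illustration.

```python
from collections import deque
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Skill:
    """Hypothetical symbolic skill model (an assumption, not the paper's)."""
    name: str
    pre: frozenset  # symbols that must hold before the skill runs
    add: frozenset  # symbols the skill makes true
    rem: frozenset  # symbols the skill makes false


def ltl_encoding(skill):
    """Render a skill as an LTL-style string: G((pre & skill) -> X(post))."""
    pre = " & ".join(sorted(skill.pre)) or "true"
    post = sorted(skill.add) + [f"!{s}" for s in sorted(skill.rem)]
    return f"G(({pre} & {skill.name}) -> X({' & '.join(post) or 'true'}))"


def feasible(init, goal, skills):
    """Breadth-first search over symbolic states.

    This is a non-reactive simplification: it only asks whether some
    sequence of skills reaches a state containing every goal symbol.
    """
    start, goal = frozenset(init), frozenset(goal)
    seen, queue = {start}, deque([start])
    while queue:
        state = queue.popleft()
        if goal <= state:
            return True
        for skill in skills:
            if skill.pre <= state:
                nxt = (state - skill.rem) | skill.add
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
    return False


def suggest_skills(init, goal, skills, symbols):
    """Enumerate one-precondition, one-effect candidate skills and keep
    those whose addition makes the goal reachable."""
    suggestions = []
    for pre, post in product(sorted(symbols), repeat=2):
        if pre == post:
            continue
        candidate = Skill(f"new_{pre}_to_{post}",
                          frozenset({pre}), frozenset({post}), frozenset({pre}))
        if feasible(init, goal, skills + [candidate]):
            suggestions.append(candidate)
    return suggestions


symbols = {"block_left", "block_mid", "block_right"}
move = Skill("move_left_to_mid", frozenset({"block_left"}),
             frozenset({"block_mid"}), frozenset({"block_left"}))

print(ltl_encoding(move))
print(feasible({"block_left"}, {"block_right"}, [move]))
print([s.name for s in suggest_skills({"block_left"}, {"block_right"}, [move], symbols)])
```

In this toy setting the goal `block_right` is unreachable with the single existing skill, and the enumeration proposes candidate skills that move the block to the right from either the left or the middle position. A real reactive task would also need to account for environment behavior, which this sketch ignores.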
