AuditWen:An Open-Source Large Language Model for Audit

Paper and Code

Oct 09, 2024

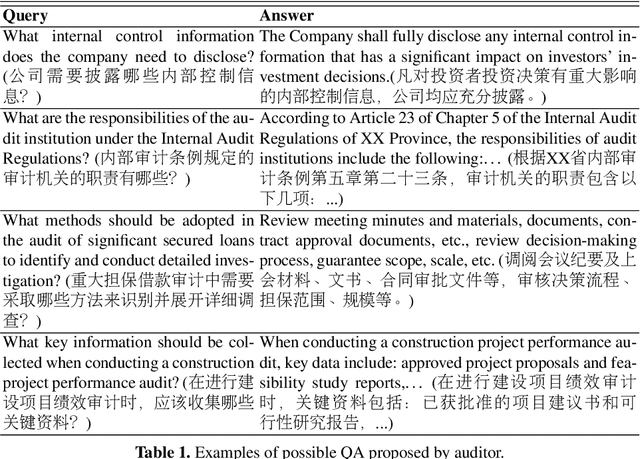

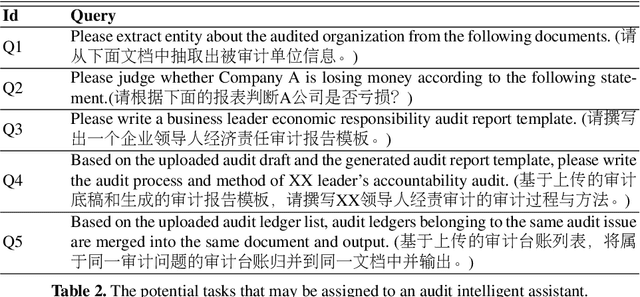

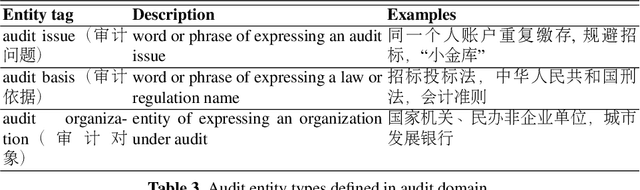

Intelligent auditing represents a crucial advancement in modern audit practices, enhancing both the quality and efficiency of audits within the realm of artificial intelligence. With the rise of large language model (LLM), there is enormous potential for intelligent models to contribute to audit domain. However, general LLMs applied in audit domain face the challenges of lacking specialized knowledge and the presence of data biases. To overcome these challenges, this study introduces AuditWen, an open-source audit LLM by fine-tuning Qwen with constructing instruction data from audit domain. We first outline the application scenarios for LLMs in the audit and extract requirements that shape the development of LLMs tailored for audit purposes. We then propose an audit LLM, called AuditWen, by fine-tuning Qwen with constructing 28k instruction dataset from 15 audit tasks and 3 layers. In evaluation stage, we proposed a benchmark with 3k instructions that covers a set of critical audit tasks derived from the application scenarios. With the benchmark, we compare AuditWen with other existing LLMs from information extraction, question answering and document generation. The experimental results demonstrate superior performance of AuditWen both in question understanding and answer generation, making it an immediately valuable tool for audit.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge