Attention Mechanisms Don't Learn Additive Models: Rethinking Feature Importance for Transformers

Paper and Code

May 22, 2024

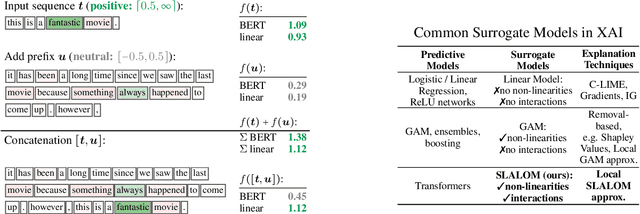

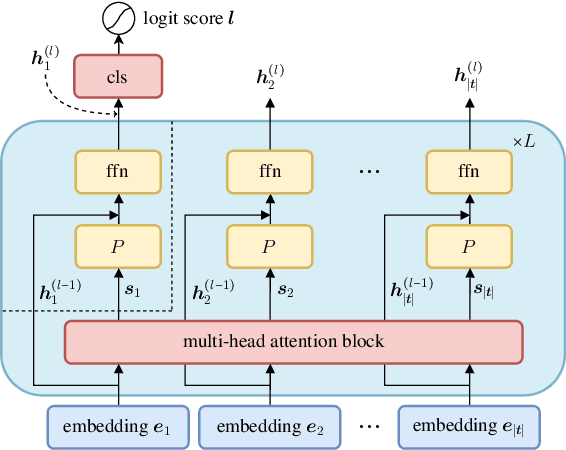

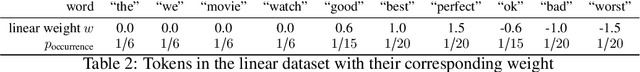

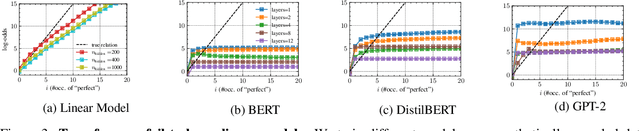

We address the critical challenge of applying feature attribution methods to the transformer architecture, which dominates current applications in natural language processing and beyond. Traditional attribution methods to explainable AI (XAI) explicitly or implicitly rely on linear or additive surrogate models to quantify the impact of input features on a model's output. In this work, we formally prove an alarming incompatibility: transformers are structurally incapable to align with popular surrogate models for feature attribution, undermining the grounding of these conventional explanation methodologies. To address this discrepancy, we introduce the Softmax-Linked Additive Log-Odds Model (SLALOM), a novel surrogate model specifically designed to align with the transformer framework. Unlike existing methods, SLALOM demonstrates the capacity to deliver a range of faithful and insightful explanations across both synthetic and real-world datasets. Showing that diverse explanations computed from SLALOM outperform common surrogate explanations on different tasks, we highlight the need for task-specific feature attributions rather than a one-size-fits-all approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge