Attention! A Lightweight 2D Hand Pose Estimation Approach

Paper and Code

Jan 22, 2020

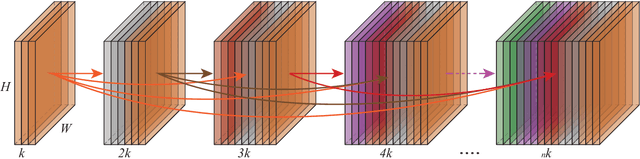

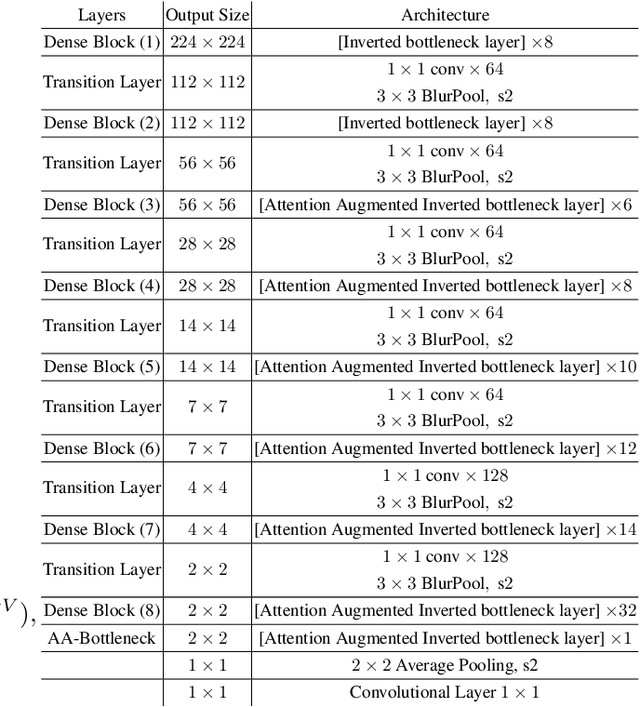

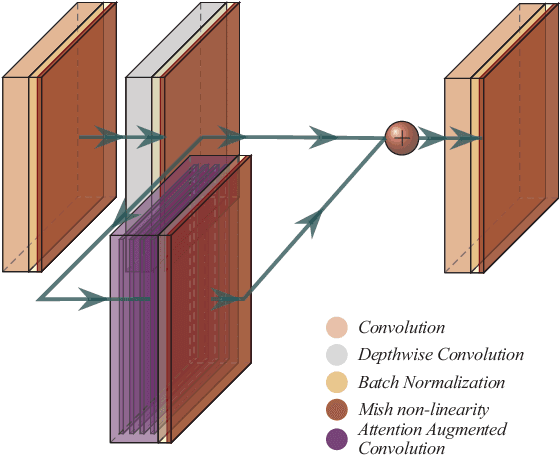

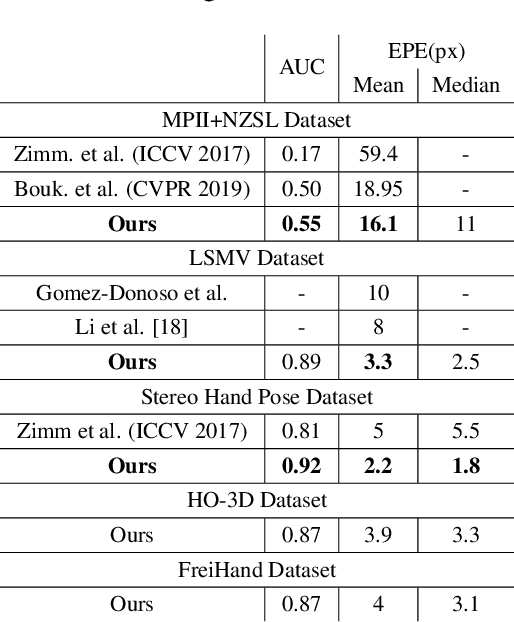

Vision-based human pose estimation is a non-invasive technology for Human-Computer Interaction (HCI). Using the hand directly as an input device is an attractive interaction method because it requires no specialized sensing equipment, such as exoskeletons or gloves, only a camera. HCI is traditionally employed in applications spanning manufacturing, surgery, entertainment, and architecture, to mention a few. Deploying vision-based human pose estimation algorithms can bring fresh innovation to these applications. In this letter, we present a novel Convolutional Neural Network architecture, reinforced with a Self-Attention module, that can be deployed on an embedded system thanks to its lightweight nature, with just 1.9 million parameters. The source code and qualitative results are publicly available.
