Asymptotically Optimal One- and Two-Sample Testing with Kernels

Aug 27, 2019

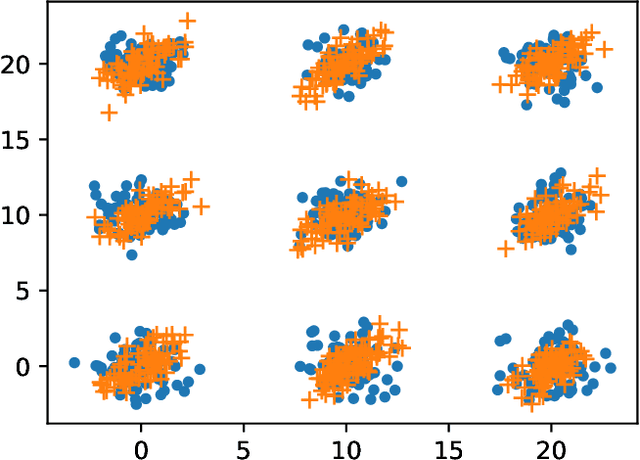

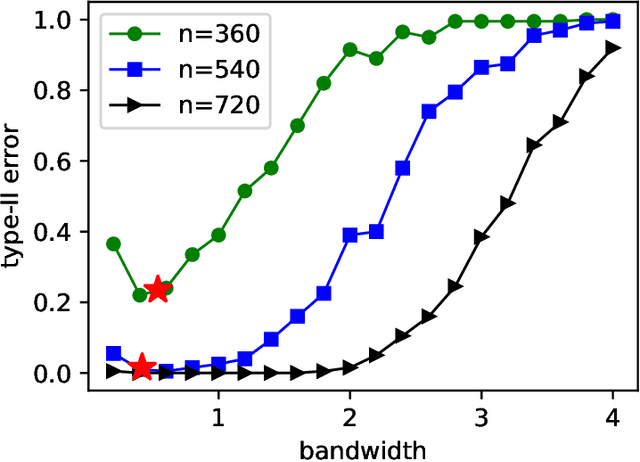

We characterize the asymptotic performance of nonparametric one- and two-sample testing. The exponential decay rate, or error exponent, of the type-II error probability is used as the asymptotic performance metric, and an optimal test achieves the maximum rate subject to a constant level constraint on the type-I error probability. With Sanov's theorem, we derive a sufficient condition for one-sample tests to achieve the optimal error exponent in the universal setting, i.e., for any distribution defining the alternative hypothesis. We then show that two classes of Maximum Mean Discrepancy (MMD) based tests attain the optimal type-II error exponent on $\mathbb{R}^d$, while the quadratic-time Kernel Stein Discrepancy (KSD) based tests achieve this optimality under an asymptotic level constraint. For general two-sample testing, however, Sanov's theorem is insufficient to obtain a similar sufficient condition. We proceed to establish an extended version of Sanov's theorem and derive an exact error exponent for the quadratic-time MMD based two-sample tests. The obtained error exponent is further shown to be optimal among all two-sample tests satisfying a given level constraint. Our results not only solve a long-standing open problem in information theory and statistics, but also provide an achievability result for optimal nonparametric one- and two-sample testing. Applications to off-line change detection and related issues are also discussed.
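
To make the quadratic-time MMD statistic mentioned above concrete, the following is a minimal sketch of the standard biased (V-statistic) estimate of the squared MMD with a Gaussian kernel. It is not the authors' code: the function names, the bandwidth of 1.0, the sample sizes, and the Gaussian test data are all illustrative assumptions, and no test threshold is computed here. Only the Python standard library is used.

```python
import math
import random


def gaussian_kernel(u, v, bandwidth=1.0):
    """Gaussian kernel exp(-||u - v||^2 / (2 * bandwidth^2)); bandwidth is an assumed value."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mean_kernel(xs, ys, bandwidth=1.0):
    """Average kernel value over all pairs (u, v) with u in xs and v in ys."""
    total = sum(gaussian_kernel(u, v, bandwidth) for u in xs for v in ys)
    return total / (len(xs) * len(ys))


def mmd2_biased(xs, ys, bandwidth=1.0):
    """Biased quadratic-time estimate of MMD^2 between the samples xs and ys."""
    return (
        mean_kernel(xs, xs, bandwidth)
        + mean_kernel(ys, ys, bandwidth)
        - 2.0 * mean_kernel(xs, ys, bandwidth)
    )


def sample_gaussian(rng, n, dim, mean):
    """Draw n points in R^dim with i.i.d. N(mean, 1) coordinates (hypothetical helper)."""
    return [[rng.gauss(mean, 1.0) for _ in range(dim)] for _ in range(n)]


rng = random.Random(0)
x = sample_gaussian(rng, 100, 2, 0.0)
y_same = sample_gaussian(rng, 100, 2, 0.0)     # same distribution as x
y_shifted = sample_gaussian(rng, 100, 2, 1.0)  # mean-shifted alternative

mmd2_same = mmd2_biased(x, y_same)
mmd2_shifted = mmd2_biased(x, y_shifted)
print("MMD^2, same distribution:   ", mmd2_same)
print("MMD^2, shifted distribution:", mmd2_shifted)
```

A two-sample test would reject the null hypothesis when this statistic exceeds a threshold chosen to satisfy the level constraint; how that threshold is set is left out of this sketch. The double loop over all sample pairs is what makes the estimate quadratic in the sample size.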
