ARock: an Algorithmic Framework for Asynchronous Parallel Coordinate Updates

Paper and Code

May 27, 2016

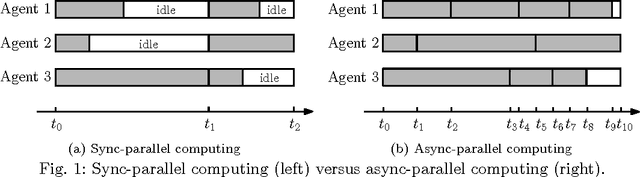

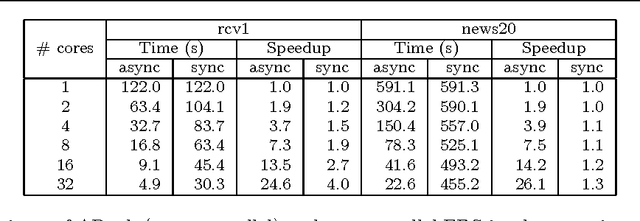

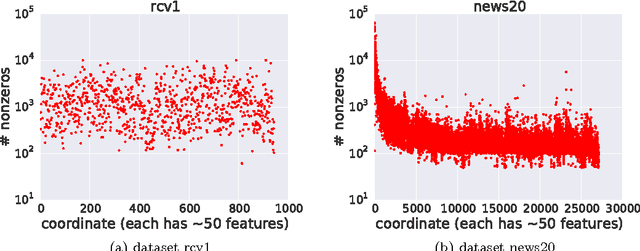

Finding a fixed point to a nonexpansive operator, i.e., $x^*=Tx^*$, abstracts many problems in numerical linear algebra, optimization, and other areas of scientific computing. To solve fixed-point problems, we propose ARock, an algorithmic framework in which multiple agents (machines, processors, or cores) update $x$ in an asynchronous parallel fashion. Asynchrony is crucial to parallel computing since it reduces synchronization wait, relaxes communication bottleneck, and thus speeds up computing significantly. At each step of ARock, an agent updates a randomly selected coordinate $x_i$ based on possibly out-of-date information on $x$. The agents share $x$ through either global memory or communication. If writing $x_i$ is atomic, the agents can read and write $x$ without memory locks. Theoretically, we show that if the nonexpansive operator $T$ has a fixed point, then with probability one, ARock generates a sequence that converges to a fixed points of $T$. Our conditions on $T$ and step sizes are weaker than comparable work. Linear convergence is also obtained. We propose special cases of ARock for linear systems, convex optimization, machine learning, as well as distributed and decentralized consensus problems. Numerical experiments of solving sparse logistic regression problems are presented.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge