Anonymization for Skeleton Action Recognition

Paper and Code

Nov 30, 2021

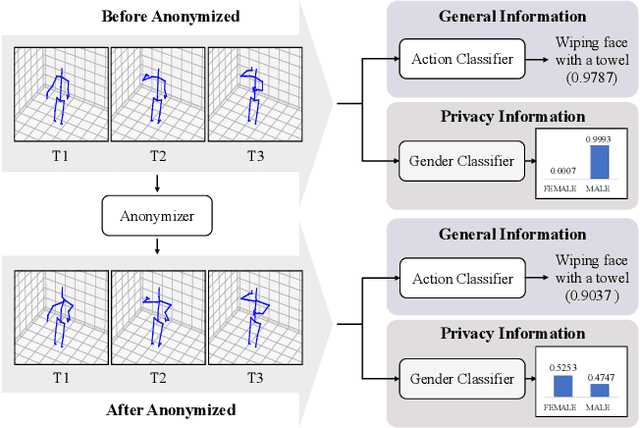

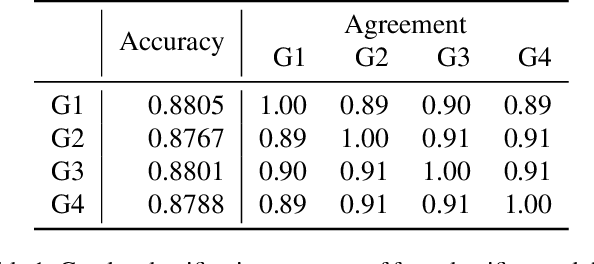

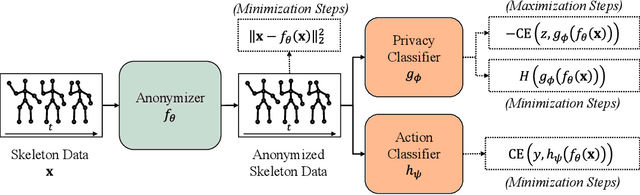

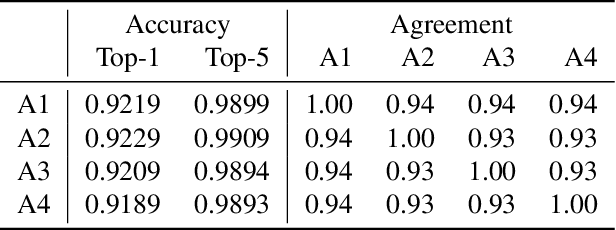

Skeleton-based action recognition attracts practitioners and researchers because its datasets are lightweight and compact. Compared with RGB-video-based action recognition, skeleton-based action recognition better protects the privacy of subjects while achieving competitive recognition performance. However, with improvements in skeleton estimation algorithms and in motion and depth sensors, skeleton datasets can preserve more detailed motion characteristics, which creates a potential for privacy leakage. To investigate this leakage, we first train a classifier to predict sensitive private information from joint trajectories. Experiments show that such a model can classify gender with 88% accuracy and re-identify a person with 82% accuracy. We propose two variants of anonymization algorithms to protect against potential privacy leakage from skeleton datasets. Experimental results show that the anonymized dataset can reduce the risk of privacy leakage while having only a marginal effect on action recognition performance.
