An Empirical Study of Domain Adaptation for Unsupervised Neural Machine Translation

Paper and Code

Aug 26, 2019

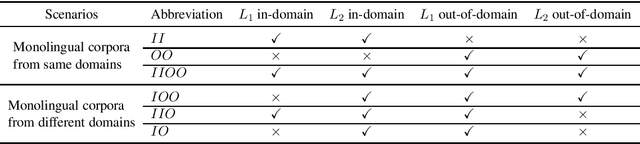

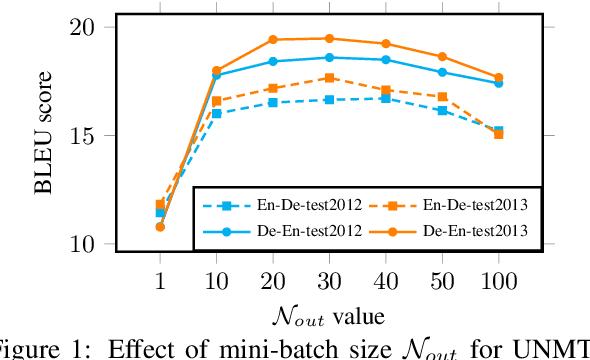

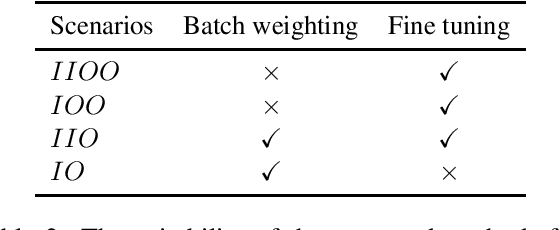

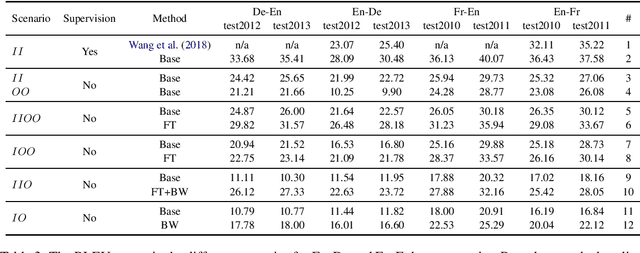

Domain adaptation methods have been well studied in supervised neural machine translation (NMT). For unsupervised neural machine translation (UNMT), however, they remain underexplored, even though UNMT has recently achieved remarkable results in some specific domains for several language pairs. In addition to the domain mismatch between training and test data that also arises in supervised NMT, UNMT can suffer from a domain mismatch between its two monolingual training corpora. In this work, we empirically examine different scenarios for unsupervised domain-specific neural machine translation. Based on these scenarios, we propose several potential solutions to improve the performance of domain-specific UNMT systems.
