An Empirical Study of DNNs Robustification Inefficacy in Protecting Visual Recommenders

Paper and Code

Oct 02, 2020

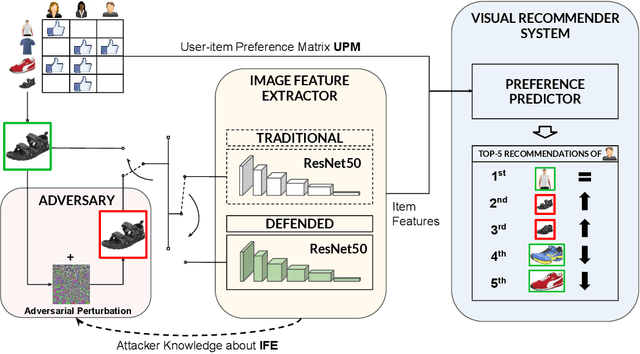

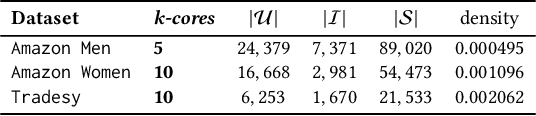

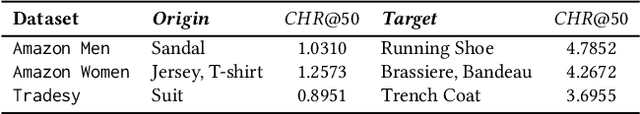

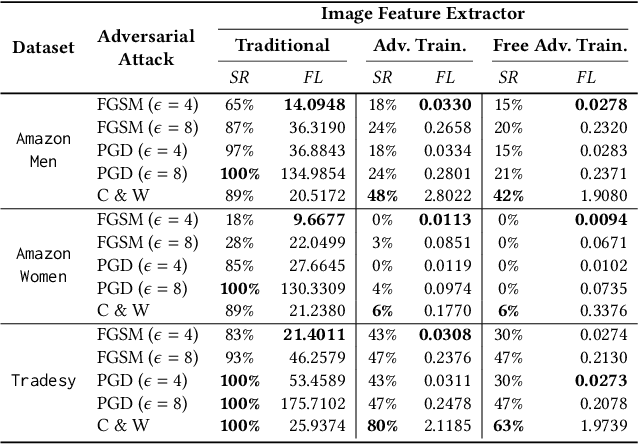

Visual-based recommender systems (VRSs) enhance recommendation performance by integrating users' feedback with the visual features of product images extracted from a deep neural network (DNN). Recently, human-imperceptible images perturbations, defined \textit{adversarial attacks}, have been demonstrated to alter the VRSs recommendation performance, e.g., pushing/nuking category of products. However, since adversarial training techniques have proven to successfully robustify DNNs in preserving classification accuracy, to the best of our knowledge, two important questions have not been investigated yet: 1) How well can these defensive mechanisms protect the VRSs performance? 2) What are the reasons behind ineffective/effective defenses? To answer these questions, we define a set of defense and attack settings, as well as recommender models, to empirically investigate the efficacy of defensive mechanisms. The results indicate alarming risks in protecting a VRS through the DNN robustification. Our experiments shed light on the importance of visual features in very effective attack scenarios. Given the financial impact of VRSs on many companies, we believe this work might rise the need to investigate how to successfully protect visual-based recommenders. Source code and data are available at https://anonymous.4open.science/r/868f87ca-c8a4-41ba-9af9-20c41de33029/.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge